Reduced connector development time by 85% (from 2 weeks to 3 days) while scaling support to 35+ data sources, 35+ destinations, 10+ AI model providers, and 65+ file types within a single modular platform.

In a Nutshell

Client’s Challenge

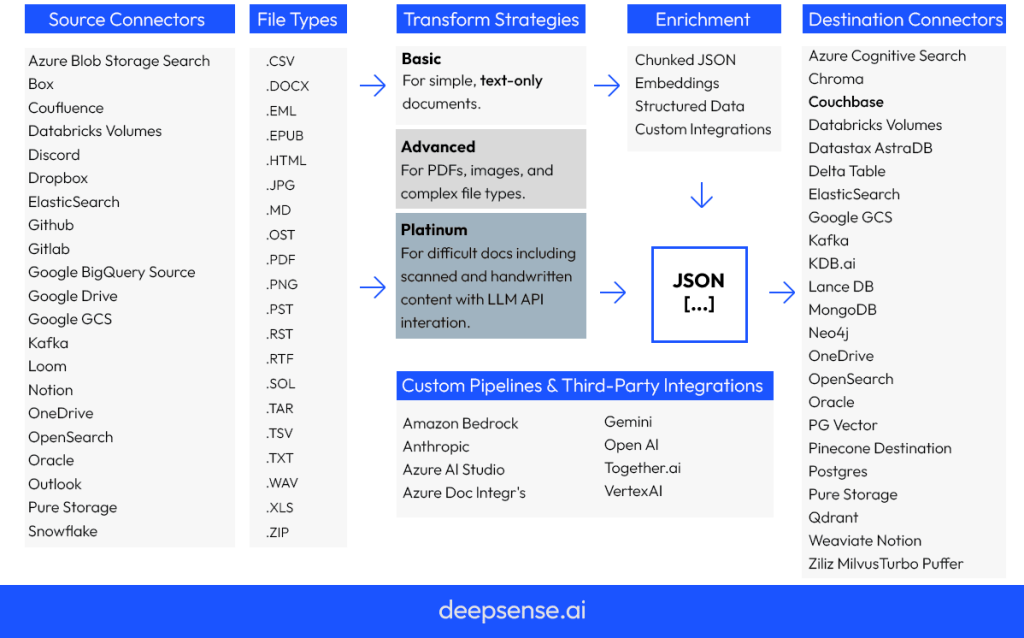

The client’s data processing platform needed to integrate with 35+ data sources, 35+ destinations, and 10+ AI model providers across 65+ file types – each with different APIs, rate limits, and security requirements. Handling unstructured data (PDFs, images, Word docs) efficiently was essential to meet enterprise-grade demands across AWS, Azure, and on-prem deployments.

Our Solution

We built a modular plugin architecture and orchestration layer that standardized integrations and accelerated connector development through a lightweight SDK and stable interfaces. The solution included Kubernetes orchestration, GitOps (ArgoCD), and secure isolation via Keycloak and AWS Secrets Manager, ensuring scalability, safety, and observability.

Client’s Benefits

Connector release time dropped from 2 weeks to just 3 days, enabling rapid expansion of integrations and faster go-to-market for new clients. Developer satisfaction and onboarding speed improved significantly.

A Deep Dive

Overview

The client operates a large-scale platform designed to ingest, process, and enrich unstructured documents using AI. To remain competitive and address growing customer demands, the platform needed to rapidly expand its ecosystem of connectors and AI model integrations without increasing operational complexity or compromising security.

Client

A technology provider delivering an enterprise-grade data processing and AI platform used by organizations that work extensively with unstructured data. The platform integrates dozens of external systems and AI model providers to enable document ingestion, extraction, enrichment, and delivery at scale.

Challenge

The client’s platform needed to process large volumes of unstructured documents—PDFs, images, Word files, and other formats—originating from diverse sources and destined for multiple downstream systems.

To meet customer demand, the platform had to support:

- 35+ data sources

- 35+ output destinations

- 10+ AI model providers

- 65+ document and file types

Each new integration required significant engineering effort, slowing time to market and limiting scalability. Building a single new connector could take up to two weeks, making it difficult to respond quickly to customer needs and expand the platform’s ecosystem.

Key Constraints

Several factors significantly increased implementation complexity:

- Wide variability in document structures across 65+ file types

- Integration with multiple AI providers (e.g. OpenAI, Anthropic, Amazon Bedrock, Vertex AI, VoyageAI), each exposing different APIs and rate limits

- Enterprise-grade deployment requirements across AWS, Azure, and on-prem environments

- Secure handling of sensitive documents, including authentication, secrets management, and auditability

- High-throughput processing without timeouts or performance degradation

The solution needed to be both highly extensible and operationally robust.

Solution

deepsense.ai designed and implemented a modular plugin architecture with a centralized orchestration layer for the client’s ETL platform.

The solution focused on four core principles:

Modular extensibility

- Standardized interfaces for data connectors and AI model providers

- Clear extension points supported by a lightweight SDK

- Minimal boilerplate to accelerate development of new plugins
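
To illustrate what a standardized connector interface with minimal boilerplate might look like, here is a small Python sketch. It is not the client's SDK: `Document`, `SourceConnector`, `register`, `create`, and `InMemorySource` are hypothetical names chosen for this example, and the registry is a plain in-process dictionary.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Any, Callable, Dict, Iterator, Type


@dataclass(frozen=True)
class Document:
    """A single unit of unstructured content moving through the pipeline."""
    name: str
    content: bytes


class SourceConnector(ABC):
    """Hypothetical extension point: every source plugin implements read()."""

    @abstractmethod
    def read(self) -> Iterator[Document]:
        """Yield documents from the external system."""


# Hypothetical in-process registry mapping a plugin name to its class.
_REGISTRY: Dict[str, Type[SourceConnector]] = {}


def register(name: str) -> Callable[[Type[SourceConnector]], Type[SourceConnector]]:
    """Class decorator that records a connector under a unique name."""
    def decorator(cls: Type[SourceConnector]) -> Type[SourceConnector]:
        if name in _REGISTRY:
            raise ValueError(f"connector {name!r} is already registered")
        _REGISTRY[name] = cls
        return cls
    return decorator


def create(name: str, **config: Any) -> SourceConnector:
    """Instantiate a registered connector from its configuration."""
    return _REGISTRY[name](**config)


@register("in_memory")
class InMemorySource(SourceConnector):
    """Toy connector: the only plugin-specific code is __init__ and read()."""

    def __init__(self, files: Dict[str, bytes]) -> None:
        self._files = files

    def read(self) -> Iterator[Document]:
        # Sort by file name so the output order is deterministic.
        for name, content in sorted(self._files.items()):
            yield Document(name=name, content=content)
```

In this sketch, the platform would only ever call `create("in_memory", files=...)` and iterate over `read()`, so adding a connector means writing one decorated class and nothing else.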

Operational safety and isolation

- Strict plugin isolation and role-based access control

- Secure secrets management and authentication flows

- Full audit logging for enterprise compliance requirements
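
As a rough illustration of how role-based access control and audit logging can work together, the Python sketch below records every authorization decision, whether allowed or denied. The role names, permission strings, and the `AccessController` class are assumptions made for this example; the production system relies on Keycloak for authentication, which this sketch does not call.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-permission mapping, for illustration only.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "viewer": {"connector:read"},
    "operator": {"connector:read", "connector:run"},
    "admin": {"connector:read", "connector:run", "connector:configure"},
}


@dataclass(frozen=True)
class AuditEvent:
    """One immutable record of an authorization decision."""
    timestamp: str
    user: str
    role: str
    action: str
    allowed: bool


@dataclass
class AccessController:
    """Checks permissions and appends every decision to an audit log."""
    audit_log: List[AuditEvent] = field(default_factory=list)

    def check(self, user: str, role: str, action: str) -> bool:
        # Unknown roles have no permissions, so they are denied by default.
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append(
            AuditEvent(
                timestamp=datetime.now(timezone.utc).isoformat(),
                user=user,
                role=role,
                action=action,
                allowed=allowed,
            )
        )
        return allowed
```

Logging denied attempts alongside granted ones is a deliberate choice here: a compliance review usually needs to see who tried to do something, not only who succeeded.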

Scalable orchestration

- Unified handling of rate limits across multiple AI providers

- Efficient processing of large document volumes

- Stable abstractions enabling predictable behavior across deployments
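
One common way to handle different provider rate limits behind a single interface is a token bucket per provider. The Python sketch below shows that technique under stated assumptions: the case study does not say which algorithm the platform uses, and `TokenBucket`, `ProviderLimits`, and the example rates are hypothetical.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Token bucket: refills at `rate` tokens per second up to `capacity`."""

    def __init__(
        self,
        rate: float,
        capacity: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate = rate
        self.capacity = capacity
        self._clock = clock
        self._tokens = capacity      # start with a full bucket
        self._last = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        """Consume `cost` tokens if available; never blocks."""
        now = self._clock()
        # Refill according to elapsed time, capped at the bucket capacity.
        self._tokens = min(
            self.capacity, self._tokens + (now - self._last) * self.rate
        )
        self._last = now
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False


class ProviderLimits:
    """Single entry point that keeps one bucket per AI provider."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._buckets: Dict[str, TokenBucket] = {}

    def configure(self, provider: str, rate: float, capacity: float) -> None:
        self._buckets[provider] = TokenBucket(rate, capacity, self._clock)

    def allow(self, provider: str) -> bool:
        """Return True if a request to `provider` may be sent right now."""
        return self._buckets[provider].try_acquire()
```

An orchestrator built this way would call `limits.allow("provider_a")` before dispatching a request and requeue the work item when it returns `False`. The injectable `clock` exists so the behavior can be tested without sleeping.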

Developer experience

- Centralized configuration and monitoring

- Consistent patterns across ingestion, processing, and delivery

- Faster onboarding for new engineers and contributors

As a result, the time required to build and release a new connector was reduced from two weeks to three days.

Technology Stack

Languages & Frameworks

- Python (FastAPI, Pydantic)

- TypeScript (Remix, React)

Orchestration & Deployment

- Kubernetes (K3s, EKS, AKS)

- Helm, Kustomize, custom Kubernetes operators

- ArgoCD (GitOps)

Infrastructure & Containers

- AWS CDK, Pulumi

- Docker

- Amazon ECR, Azure Container Registry

AI & ML

- OpenAI

- Anthropic Claude

- Amazon Bedrock

- Vertex AI

- VoyageAI

- Custom model integrations

Data & Messaging

- PostgreSQL

- Redis

- NATS message bus

Security

- Keycloak

- AWS Secrets Manager

Observability

- OpenTelemetry

- CloudWatch

- Elasticsearch

- Grafana Alloy

What Made the Solution Unique

Unlike traditional monolithic integration layers, the platform was designed as a plugin-first system with strong isolation and ownership boundaries. This enabled rapid development without sacrificing security, stability, or operational clarity.

Key differentiators included:

- A dedicated plugin registry

- A custom prompt management system for AI models

- Uniform abstractions spanning ingestion, AI processing, and delivery
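
The case study does not describe the internals of the prompt management system. As a minimal sketch of the general idea, assuming prompts are stored as named, versioned templates, it could look like the following in Python. `PromptStore` and the example prompt text are hypothetical.

```python
from string import Template
from typing import Dict, Mapping, Optional, Tuple


class PromptStore:
    """Keeps every version of each named prompt template."""

    def __init__(self) -> None:
        self._templates: Dict[Tuple[str, int], Template] = {}
        self._latest: Dict[str, int] = {}

    def add(self, name: str, text: str) -> int:
        """Store a new version of a prompt and return its version number."""
        version = self._latest.get(name, 0) + 1
        self._templates[(name, version)] = Template(text)
        self._latest[name] = version
        return version

    def render(
        self,
        name: str,
        variables: Mapping[str, str],
        version: Optional[int] = None,
    ) -> str:
        """Fill in a template; defaults to the latest version.

        Template.substitute raises KeyError if a placeholder is missing,
        so incomplete prompts fail before they reach a model.
        """
        selected = self._latest[name] if version is None else version
        return self._templates[(name, selected)].substitute(variables)
```

Keeping old versions addressable means a pipeline can pin a specific prompt version while a newer one is evaluated, for example `store.render("summarize", {"doc_type": "PDF", "n": "3"}, version=1)`.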

Results

Following production rollout, the client achieved measurable business and engineering impact:

- Connector development time reduced from 2 weeks to 3 days

- Faster onboarding and higher developer satisfaction

- Out-of-the-box support for:

  - 35+ data sources

  - 35+ destinations

  - 10+ AI model providers

  - 65+ file types

- Expanded platform capabilities that enabled new customer use cases

- Increased qualified leads and steady growth in enterprise deployments

Summary

By introducing a modular, plugin-based architecture with standardized interfaces and centralized orchestration, deepsense.ai enabled the client to dramatically accelerate integration development, improve developer experience, and unlock faster platform growth—transforming connector delivery from a bottleneck into a scalable competitive advantage.