Table of contents

With the growing importance and popularity of social media, the application of natural language processing techniques to this domain is becoming an increasingly trendy topic among NLP researchers. As part of an R&D project aiming to contribute to the advancement of Polish NLP, deepsense.ai experts have introduced TrelBERT, a language model for Polish social media which has achieved outstanding results in detecting cyberbullying.

Table of contents

“We actively follow the latest international developments in the field of NLP and, as part of the R&D project, our team has decided to take up the challenge and support the advancement of Polish NLP. Working on TrelBERT not only brought us a great deal of satisfaction, but also allowed us to learn how to handle the rather informal language used on social media. When examining the resulting model, we discovered that it knows a lot about Polish sports and politics – topics frequently discussed online by humans” said Artur Zygadło, Lead Data Scientist at deepsense.ai.

The typical approach to training modern NLP models is so-called transfer learning, which assumes the development of one large NLP model that captures general knowledge about the language, and can then be trained to perform various specific tasks. The starting point for the experiments was HerBERT, a solution previously published by Allegro – the company behind the largest e-commerce platform in Poland. Their model already knew the general rules, words and their meanings in different contexts in the Polish language, which it learned by “studying” texts from various sources: CommonCrawl, the National Corpus of Polish, movie subtitles or Wikipedia. What it did not know about was the language of social media, which differs significantly from texts in the resources listed above. And this is what deepsense.ai’s team taught it.

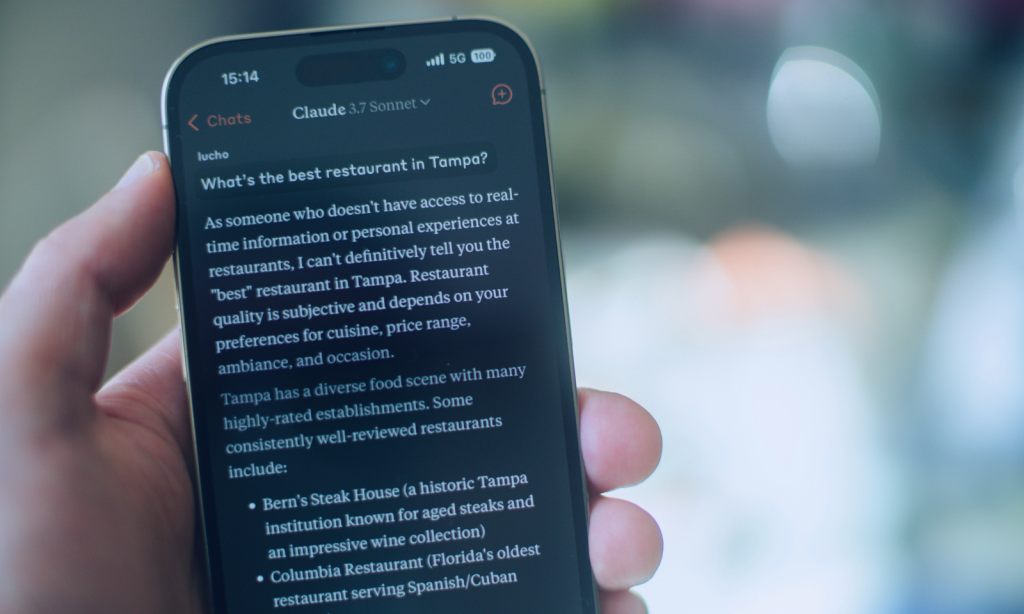

The model created by deepsense.ai utilized HerBERT and was further trained using almost 100 million messages extracted from Polish Twitter. The decision was made to name the model TrelBERT, as “trel” is a Polish word describing the sound made by a bird (referring to “tweet”). To evaluate TrelBERT against the tasks included in the Polish NLP, a benchmark called KLEJ (analogous to the famous English GLUE benchmark) was used. One of the tasks that was especially interesting was one which served the purposes of cyberbullying detection.

The goal was to prepare a solution capable of determining whether a given Tweet is harmful or not. In this particular task, thanks to being trained on Twitter texts, the model surpassed all the other competitors and is currently holding the top position on the KLEJ leaderboard (in the “CBD” column – results for cyberbullying detection).

“It is no surprise to me that our model has achieved the best results in the cyberbullying detection task. This problem was born on social media and only exists there. We suspect that our model would beat the competition in other social media-related tasks, where content is spontaneous and generated by users, such as sentiment analysis or emotion recognition. Unfortunately, the Polish NLP community lacks such datasets” said Wojciech Szmyd, Data Scientist at deepsense.ai.

Apart from submitting the model’s results to KLEJ, deepsense.ai made TrelBERT available in the popular Hugging Face repository of models. You can give the model a try and read more about it here.