Throughout history, tackling pandemics has always been about using the latest knowledge and approaches. Today, with AI-powered solutions, healthcare has new tools to tackle present and future challenges, and the COVID-19 pandemic will prove to be a catalyst of change.

It was probably a typical October day in Messina, a Sicilian port, when 12 Genoese ships docked. People were horrified to discover the dead bodies of sailors aboard, and with them the Black Death entered Europe. Today, in the age of vaccines and advanced medical treatments, the specter of a pandemic may until recently have seemed a phantom menace. But the COVID-19 pandemic has proved otherwise.

COVID-19 currently poses several challenges, including symptoms that can easily be mistaken for those of the common flu. An X-ray or CT image of the lungs is a key element in the diagnosis and treatment of COVID-19: the disease produces several telltale signs that are easy for trained professionals to spot. Or for a trained neural network.

Source: researchgate.net

A neural network can see details the average observer cannot, and even specialists would be hard-pressed to find. But such skill requires a significant amount of training and a good dataset.

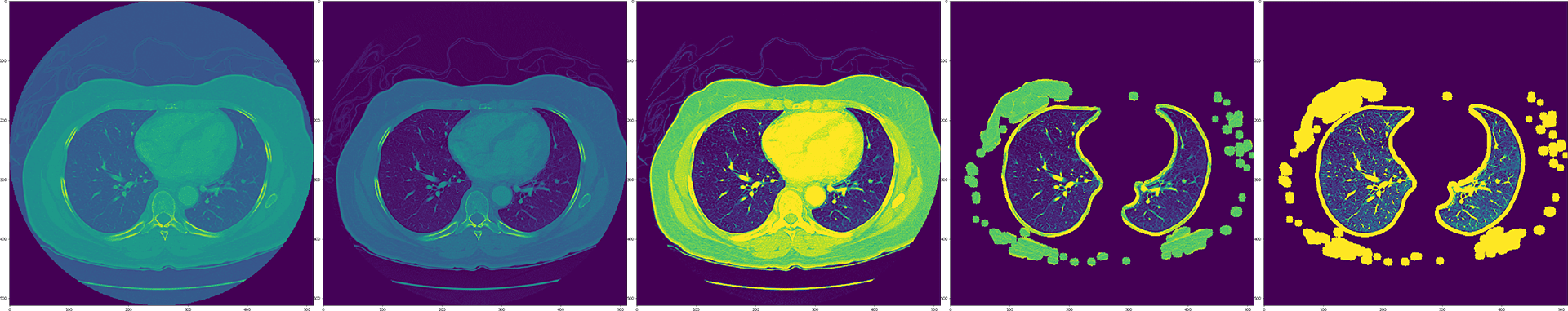

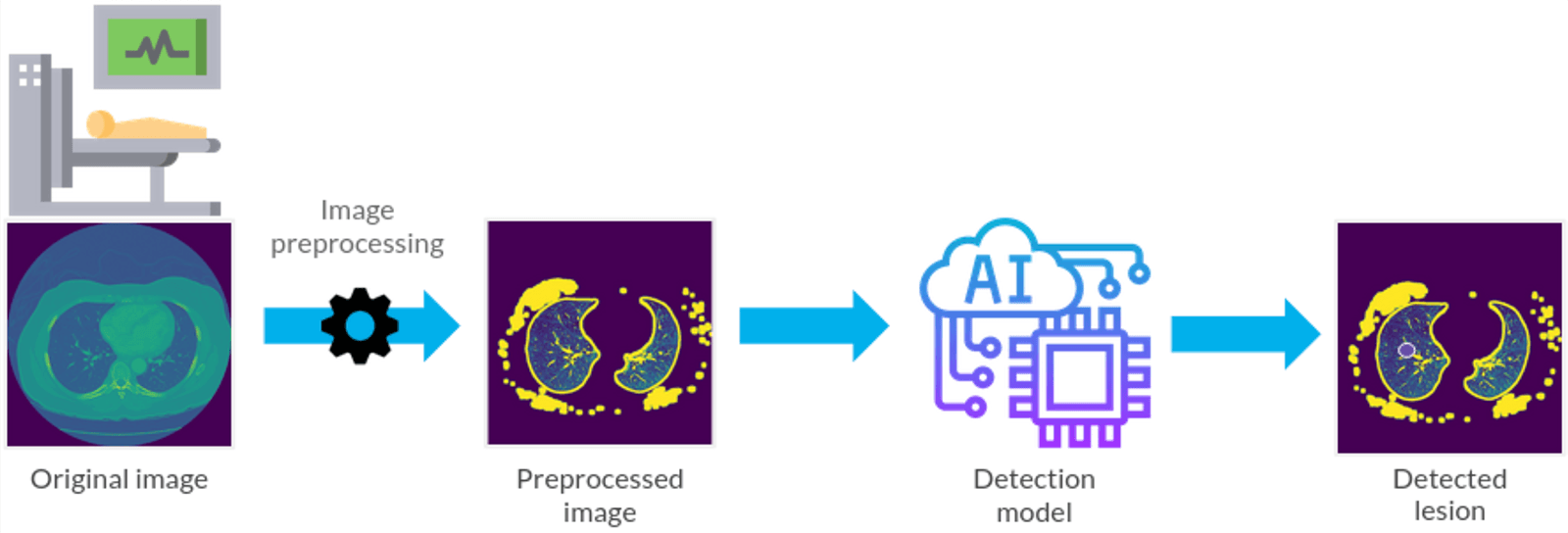

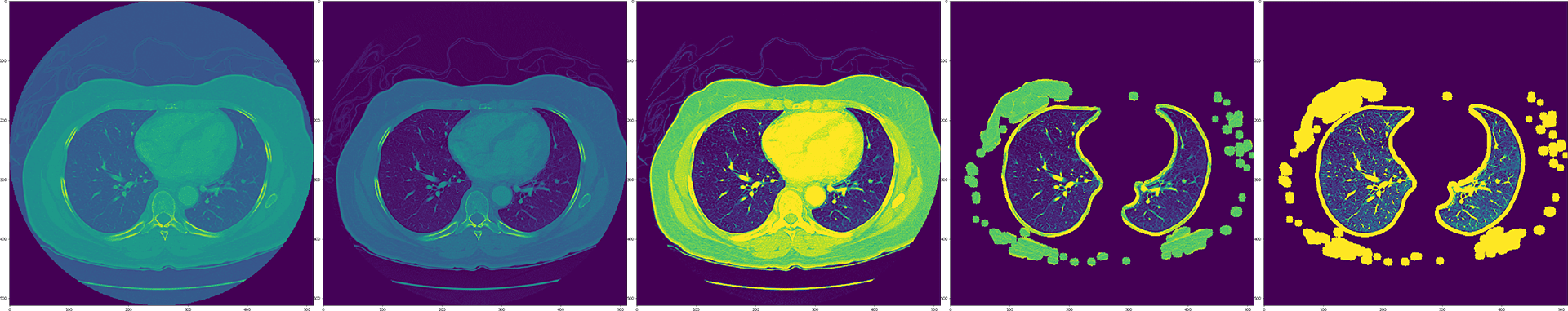

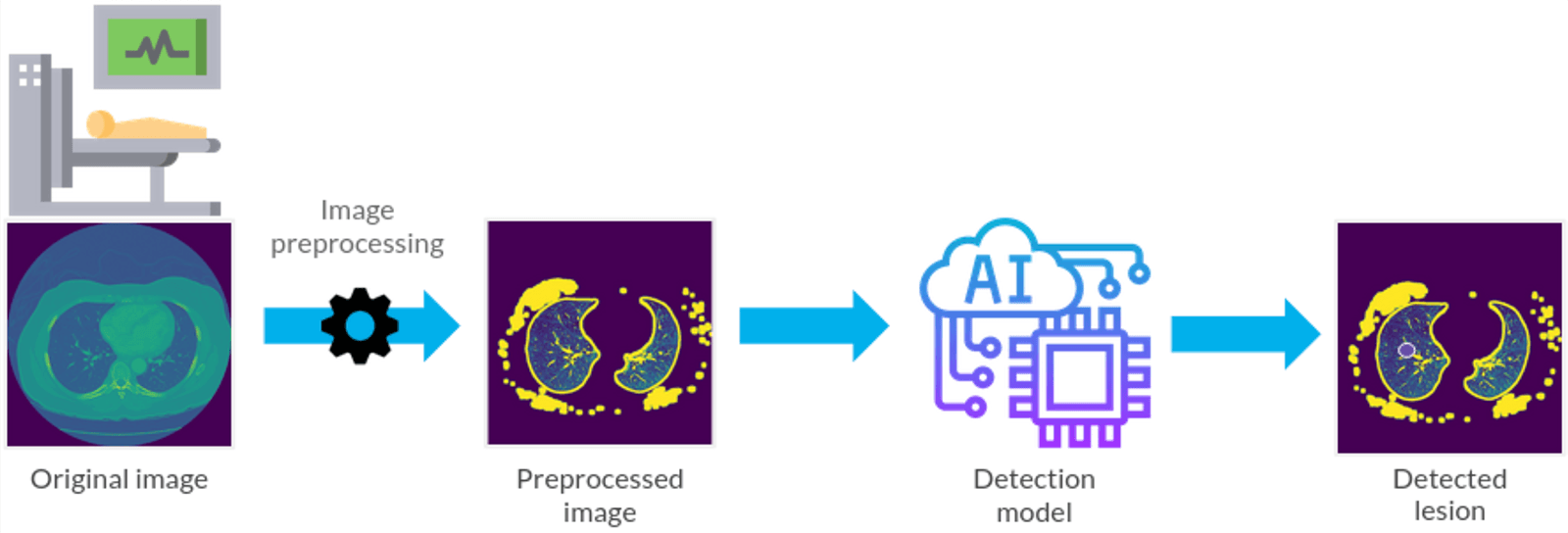

Neural networks: a building block for medical AI analysis

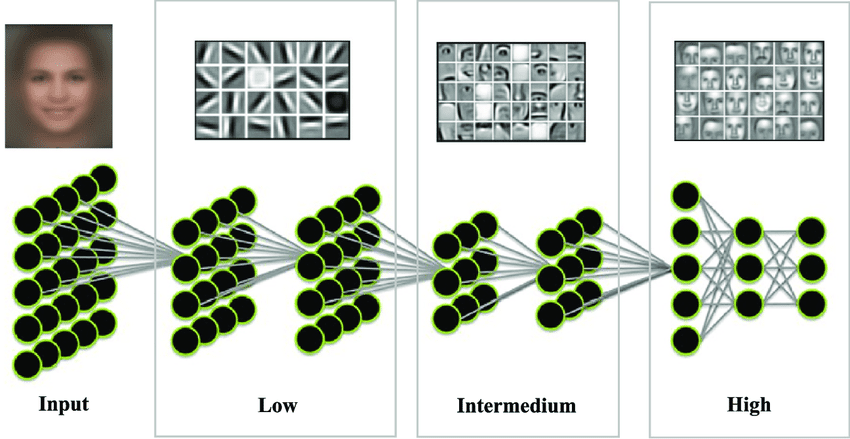

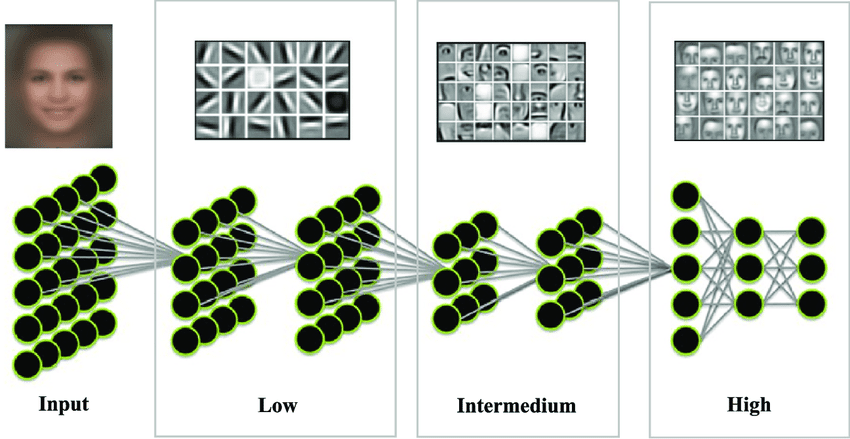

Computer scientists have traditionally developed methods that find keypoints on images based on defined heuristics, which let them tackle a huge array of problems. One example is locating machine parts on a uniform conveyor belt, where simple colour filtration differentiates them from the background. But this approach breaks down for more sophisticated problems, where extensive domain knowledge is required.

Enter neural networks, algorithms inspired by the mathematical model of how the human brain processes signals. In the same way as humans gain knowledge by gathering experience, neural networks process data and learn on their own, instead of being manually tuned.

In AI-powered image processing, every pixel is represented as an input node and its value is passed to neurons in the next layer, allowing the interdependencies between pixels to be captured. As seen in the face detection model below, the lower layers develop the ability to filter simple shapes like edges and corners (e.g., eye corners) or color gradients. These are then used by intermediate layers to construct more sophisticated shapes representing parts of the objects being analysed (in this case eyes or parts of lips; in a chest image, a lung edge). The higher layers analyse the recognised parts and classify them as specific objects. In the case of X-ray images, such objects may be a rib, a lung or an irrelevant object in the background.

Source: researchgate.net
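To make the idea of lower layers filtering edges more concrete, here is a minimal sketch in plain NumPy. It slides a fixed, hand-written Sobel kernel over a toy image; a trained network would learn its own kernels from data rather than use this one, and the function name `convolve2d` and the toy image are illustrative assumptions, not part of any model described here.

```python
import numpy as np


def convolve2d(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Slide a kernel over an image without padding (cross-correlation),
    which is the core operation of a convolutional layer."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=float)
    for i in range(out_h):
        for j in range(out_w):
            # Weighted sum of the pixel neighbourhood under the kernel
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out


# Hand-written Sobel kernel: responds to left-to-right intensity changes.
SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)

# Toy image (assumption for illustration): dark left half, bright right half.
image = np.zeros((5, 6))
image[:, 3:] = 1.0

response = convolve2d(image, SOBEL_X)
print(response)  # non-zero only in the columns that straddle the edge
```

The response is zero over flat regions and large where the window covers the dark-to-bright boundary, which is the kind of signal later layers can combine into more complex shapes.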

What does it take to train neural networks?

Data scientists spend a lot of time ensuring their models can generalise, and can thus deliver accurate predictions from data they didn’t encounter during training. This requires vast knowledge of data preprocessing and augmentation techniques, state-of-the-art network architectures and error-interpreting skills. The iterative process of designing and executing experiments also consumes a great deal of time and computing power, and requires good organisation if it is to be done efficiently. Under these conditions, high prediction accuracy is hard to achieve. It is an ability that deepsense.ai’s teams have been developing for 7 years.

The key difference between a human specialist and a neural network is that the latter is completely domain-agnostic. An algorithm that excelled at segmenting satellite images, or at recognising individual North Atlantic right whales in a population of 447, can just as well be used for medical image recognition after tuning.

AI in medical data

Numerous AI solutions are currently used in medicine, from appointments and digitization of medical records to drug dosing algorithms (applications of artificial intelligence in health care). However, doctors still have to perform painstaking and repetitive tasks such as analyzing images.

Images are used across the field of medicine, but they play a particularly important role in radiology. According to IBM estimates, up to 90% of all medical data is in image form, be it X-rays, MRIs or most other output from a diagnostic device. That is why radiology as a field is so open to using new technologies. Computers, initially used in clinical imaging for administrative work such as image acquisition and storage, have become an indispensable element of the work environment since the introduction of picture archiving and communication systems.

Recently, deep learning has been used with great success in medical imaging thanks to its ability to extract features. In particular, neural networks have been used to detect and differentiate bacterial and viral pneumonia in children’s chest radiographs. COVID-19 appears to be a similar case. Studies show that 86% of COVID-19 patients have ground-glass opacities (GGO), 64% have mixed GGO and consolidation and 71% have vascular enlargement in the lesion. These signs can be observed on CT scans as well as chest X-ray images and can be relatively easily spotted by a trained neural network.

CT and X-ray scans offer several advantages when it comes to diagnosing COVID-19. The speed and noninvasiveness of these methods make them suitable for helping doctors assess how the infection is developing and decide whether to perform invasive tests. Also, due to the lack of both vaccines and medications, immediately isolating the infected patient is the only way to prevent the spread of the disease.

How deepsense.ai already supports healthcare

deepsense.ai’s first foray into medical data came when we took part in a competition to classify the severity of diabetic retinopathy using images of retinas. The contestants were given over 35,000 images of retinas, each with a severity rating. There were 5 severity classes, and their distribution was fairly imbalanced: most of the images showed no signs of disease, and only a few percent had the two most severe ratings. After months of hard work, we took 6th place.

As we gained more contact and experience with medical data, our results improved, and after some time we were able to take on challenges such as producing an algorithm that could automatically detect nuclei. With images acquired under a variety of conditions and differing in cell type, magnification, and imaging modality (brightfield vs. fluorescence), the main challenge was to ensure the ability to generalise across these conditions.
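An imbalanced class distribution like the one described for the retinopathy dataset is commonly handled by weighting rare classes more heavily in the training loss. The sketch below shows one widely used heuristic, weights inversely proportional to class frequency. It is not a description of the approach used in the competition, and the label counts are invented for illustration only.

```python
from collections import Counter
from typing import Dict, Hashable, Sequence


def inverse_frequency_weights(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Return a weight per class: n_samples / (n_classes * class_count).

    Rare classes get weights above 1, frequent classes below 1, so that
    every class contributes equally to a weighted loss in aggregate.
    """
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}


# Hypothetical severity labels 0..4 (made-up counts, NOT the real dataset):
# most images healthy, very few in the two most severe classes.
labels = [0] * 70 + [1] * 10 + [2] * 14 + [3] * 3 + [4] * 3

weights = inverse_frequency_weights(labels)
for cls in sorted(weights):
    print(f"class {cls}: weight {weights[cls]:.3f}")
```

These weights could then be passed to a weighted loss function in whichever training framework is in use; resampling the minority classes is a common alternative.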

Another interesting project involved automatic stomatological assessment. We trained a model to read an X-ray image and to detect and identify teeth, accessories and lesions including laces, implants, cavities, cavity fillings, and parodontosis, among a long list of others. In yet another project, we estimated the minimum (end-systolic) and maximum (end-diastolic) volumes of the left ventricle from a set of MRI images taken over one heartbeat. Our results were rated “excellent” by the cardiologists who reviewed our work.
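The two left-ventricle volumes are clinically useful largely because of what can be derived from them. As a hedged illustration (the project description above does not say which derived quantities were reported), the standard stroke volume and ejection fraction formulas can be written as a small helper; the function name and example values are assumptions.

```python
from typing import Tuple


def stroke_volume_and_ejection_fraction(edv_ml: float, esv_ml: float) -> Tuple[float, float]:
    """Derive stroke volume (mL) and ejection fraction (%) from
    end-diastolic (maximum) and end-systolic (minimum) volumes."""
    if edv_ml <= 0:
        raise ValueError("end-diastolic volume must be positive")
    if not 0 <= esv_ml <= edv_ml:
        raise ValueError("end-systolic volume must lie between 0 and EDV")
    stroke_volume = edv_ml - esv_ml              # blood ejected per beat
    ejection_fraction = 100.0 * stroke_volume / edv_ml
    return stroke_volume, ejection_fraction


# Illustrative values only, not taken from the project.
sv, ef = stroke_volume_and_ejection_fraction(edv_ml=120.0, esv_ml=50.0)
print(f"stroke volume: {sv:.1f} mL, ejection fraction: {ef:.1f}%")
```

Because the ejection fraction divides by the end-diastolic volume, errors in either predicted volume propagate into it, which is one reason accurate volume estimates matter.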

The standardized formats used in medical imaging allow for better transfer of knowledge in modeling different problems. In a recent research project we explored the potential of image preprocessing of CT scans in DICOM format.

Image preprocessing is a vital aspect of computer vision projects. Developing the optimal procedure rests upon the team’s experience in similar projects as well as their ability to explore new ideas. In this case the specialized image preprocessing methods we developed made the image more readable for the model and boosted its performance by 20%.
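The specialized preprocessing methods mentioned above are not described in detail, so the following is only a generic sketch of one common CT preprocessing step: converting stored pixel values to Hounsfield units and applying an intensity window. The default rescale slope and intercept and the lung window settings are typical illustrative values and assumptions here; in practice the rescale parameters are read from each DICOM file's Rescale Slope and Rescale Intercept attributes.

```python
import numpy as np


def to_hounsfield(raw: np.ndarray, slope: float = 1.0, intercept: float = -1024.0) -> np.ndarray:
    """Convert stored CT pixel values to Hounsfield units (HU).

    slope/intercept defaults are illustrative; real values come from
    the scan's DICOM header.
    """
    return raw.astype(np.float32) * slope + intercept


def apply_window(hu: np.ndarray, center: float = -600.0, width: float = 1500.0) -> np.ndarray:
    """Clip HU values to a window and rescale them to the range [0, 1].

    The defaults approximate a lung window (an assumption for this sketch).
    """
    low = center - width / 2.0
    high = center + width / 2.0
    return (np.clip(hu, low, high) - low) / (high - low)


# Toy 1x4 "scan" of stored values (assumption for illustration).
raw = np.array([[0, 424, 1024, 2024]], dtype=np.int16)
hu = to_hounsfield(raw)      # -1024, -600, 0, 1000 HU
windowed = apply_window(hu)  # values outside the window saturate at 0 or 1
print(windowed)
```

Windowing concentrates the model's input range on the tissue densities of interest instead of spreading it across the full range a scanner can record.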
