Explore all the technology expertise we have to develop AI solutions

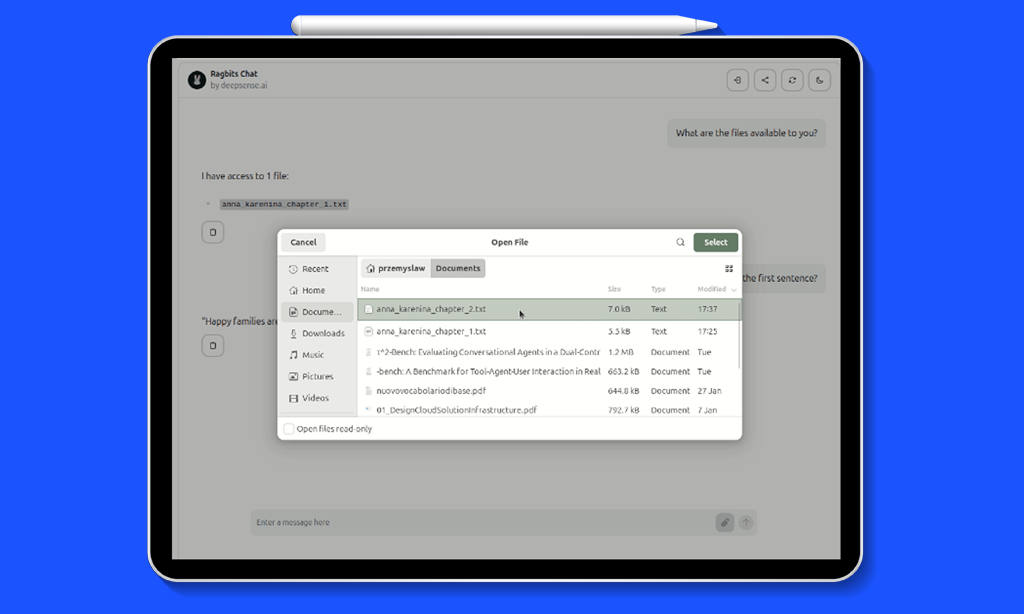

Deploy Agentic RAG Pipelines in Minutes with ragbits

Get to know us, our leadership, development direction, and why we call ourselves applied AI experts.

Look at our open positions and join the applied AI revolution!

With experience across industries,

we deliver impactful projects in these key sectors.

Applied AI Experts Blog

In-depth insights on LLMs, RAG, AI agents, MLOps, computer vision, edge solutions, predictive analytics, and more, written for both business leaders and developers.

-

deepsense.ai Launches Its Google Cloud Partnership with a Dedicated Gemini Enterprise Practice

-

When Code Gets Cheaper, Judgment Gets More Precious: Quality Bottlenecks in Enterprise AI Systems

-

GxP-Compliant AI Deployment. The New Competitive Edge in Life Sciences

-

Early Access to OpenAI’s Agent Execution Layer: What It Means for Enterprise AI Implementation

-

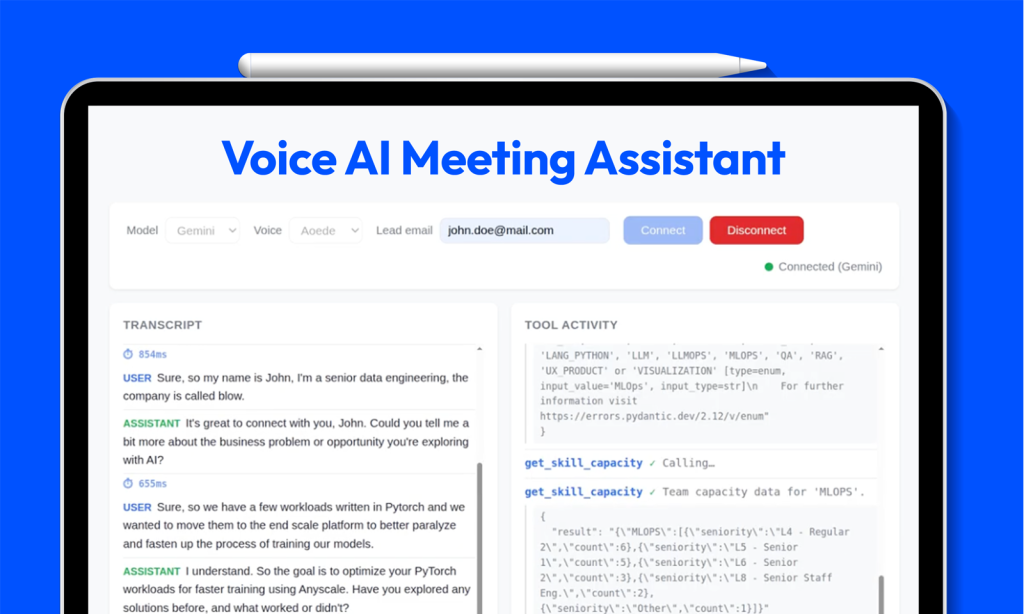

Realtime Voice AI in the Enterprise: Overcoming Latency with Native Audio Models

-

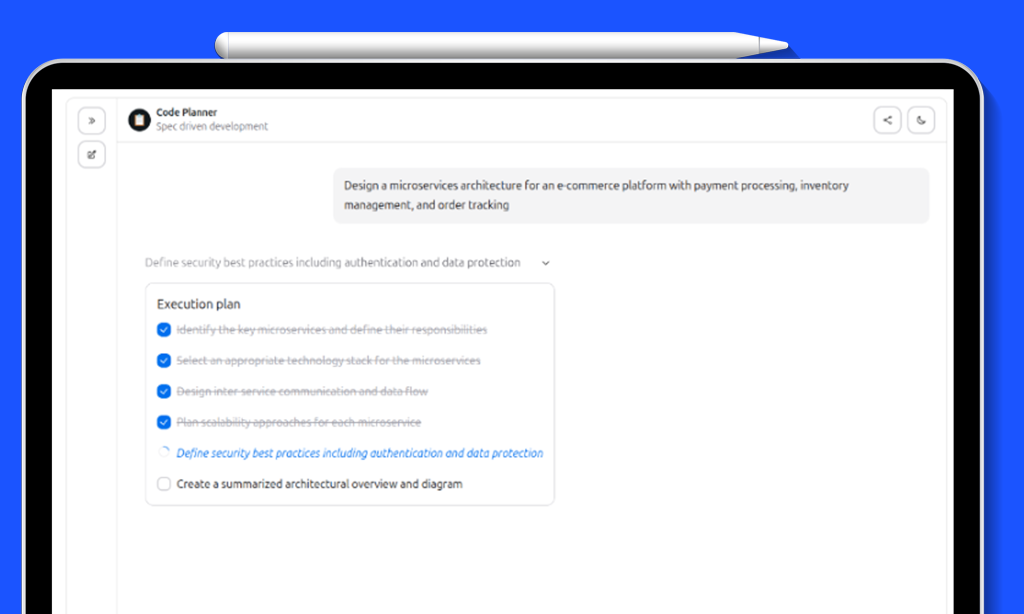

Task Planning, Execution Visibility, and Persistent Memory for AI Agents: ragbits 1.6 release

-

Building Production AI Agents: Hooks, Tool Confirmation, and Multi-Agent Orchestration in ragbits 1.5 release

-

Orchestration Before Optimization: How to Build Enterprise Agents That Survive UAT

-

OAuth2, Extensible API Schema, and File Handling for Production-Grade GenAI: ragbits 1.4 release

-

From Token Prediction to World Models: The Architectural Evolution After LLMs

-

Building MCPs for Regulated Industries: Lessons from Production AI in Life Sciences

-

Coordinate or Collapse: Why Enterprise Agentic Systems Break at Scale

-

Latency Kills Voicebots Faster Than Bad Models

-

From Pilots to P&L: The 12 Factors That Determine Agentic AI ROI

-

Inside the Minds of CTOs: Why We Built an Enterprise LLM Adoption Report for 2025/26

-

AI Advisory Perspective for 2026. What We Learned After the OpenAI Dinner

-

deepsense.ai becomes Anthropic’s Service Partner

-

MCP in the Enterprise: Real Security Risks and How Developers Can Mitigate Them

-

Award-Winning Enterprise AI Advisory: deepsense.ai Recognized at European AI Awards

- Understanding the Model Context Protocol: How Developers Can Build Secure, Cross-Model AI Integrations for Claude, ChatGPT, Cursor and GitHub Copilot
- Why 20% of ‘Chest X-Rays’ Are Hands – and What That Means for Medical AI