Applied AI Experts Blog

Explore in-depth insights on LLMs, RAG, AI agents, MLOps, computer vision, edge solutions, predictive analytics, and more, written for both business leaders and developers.

-

AI in Customer Service: How RAG and LLMs Are Transforming Support at Scale

-

Standardizing AI Agent Integration: How to Build Scalable, Secure, and Maintainable Multi-Agent Systems with Anthropic’s MCP

-

Your Next Junior Developer is an Agent. Coding Tasks, PRs & Tests — Automated

-

AutoML in 15 Minutes. From Hypothesis to Production-Ready Model

-

LLM Inference Optimization: How to Speed Up, Cut Costs, and Scale AI Models

-

Browser AI Automation: Can LLMs Really Handle the Mundane? From Lunch Orders to Complex Workflows

-

Region of Interest Pooling and Region of Interest Align explained

-

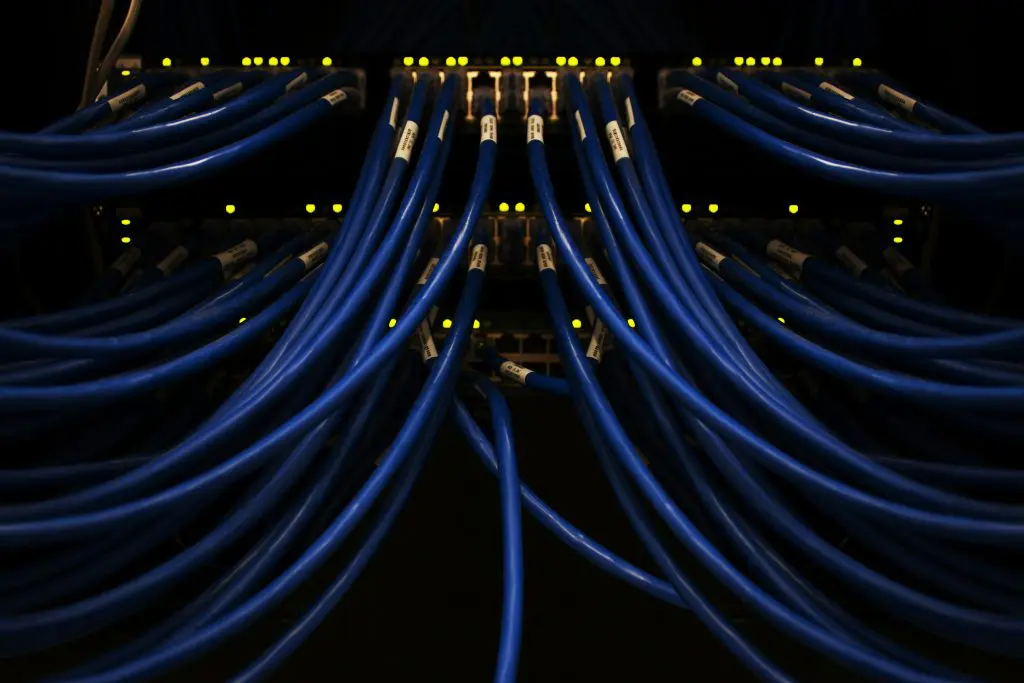

Crusoe and deepsense.ai Forge Partnership to Elevate AI Infrastructure and Efficiency

-

deepsense.ai and Vespa Partnering to Accelerate Enterprise AI with Scalable, Data-Driven AI Applications

-

LLM Inference as-a-Service vs. Self-Hosted: Which is Right for Your Business

-

AI Agents Beyond the Hype – How They’re Powering the Uncool but Essential Use Cases

-

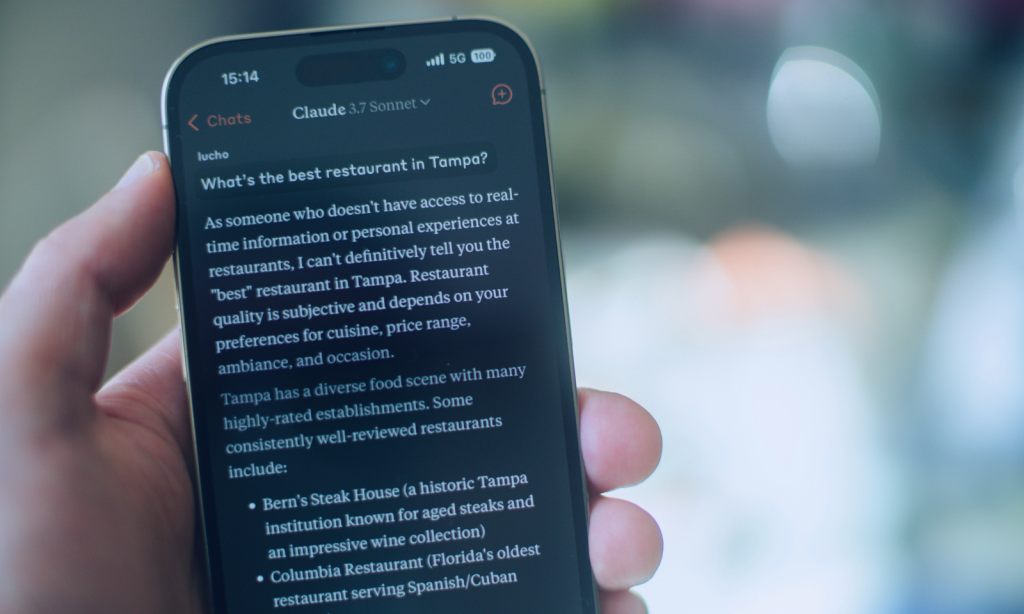

Hallucinating Reality. An Essay on Business Benefits of Accurate LLMs and LLM Hallucination Reduction Methods

-

Does Your Model Hallucinate? Tips and Tricks on How to Measure and Reduce Hallucinations in LLMs

-

Evaluations, Limitations, and the Future of Web Agents – WebGPT, WebVoyager, Agent-E

-

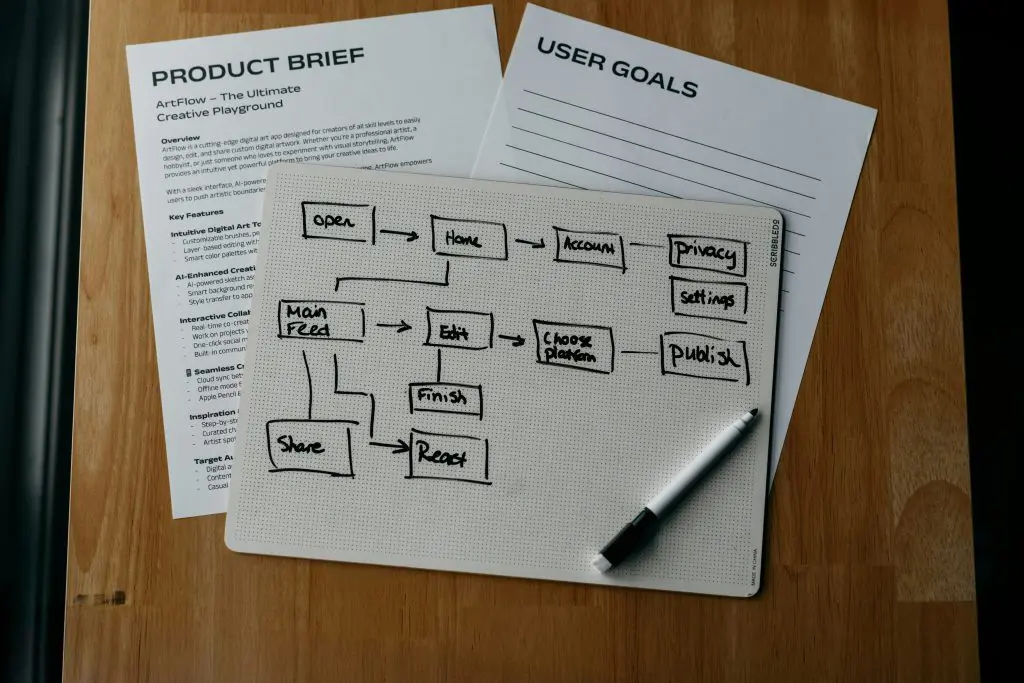

Why and How to Build AI Agents for LLM Applications

-

AI Copilot’s Impact on Productivity in Revolutionizing Ada Language Development

-

Optimizing Computational Resources for Machine Learning and Data Science Projects: A Practical Approach

-

Implementing Small Language Models (SLMs) with RAG on Embedded Devices Leading to Cost Reduction, Data Privacy, and Offline Use

-

From LLMs to RAG. Elevating Chatbot Performance. What is the Retrieval-Augmented Generation System and How to Implement It Correctly?

-

Reducing the cost of LLMs with quantization and efficient fine-tuning: how can businesses benefit from Generative AI with limited hardware?

-

Achieving accurate image segmentation with limited data: strategies and techniques