Exploration of data from iPhone motion coprocessor

During the Christmas break I met my brother-in-law, who is an ultimate gadgeteer (an excellent trait in a brother-in-law). He told me that most iPhones have a built-in motion coprocessor and that they count steps by default. There is no need to turn anything on; it works all the time (assuming that the phone is with you).

On the iPhone 5s and later, if you have the ‘Health’ application, you can easily check whether your activity is being tracked.

A bit of googling and it turns out that the measurements are quite accurate (according to Wired magazine, the error is smaller than 1%). Some articles even claim that this method is more accurate than other fitness trackers.

As you can imagine, we spent the rest of the evening trying to load this data into R and doing some data exploration. You can easily do this yourself. To export the data from the phone just follow these instructions.

The data will be exported as a single XML file. Just email it to yourself and we can start our adventuRe.

First, let’s load the data into R:

    library(XML)

    data <- xmlParse("export.xml")
    xml_data <- xmlToList(data)

The app records the number of steps, distance, and some other parameters. Let’s extract only the number of steps.

    # identical() yields FALSE (never NA) for elements without a "type" attribute
    xml_dataSel <- xml_data[sapply(xml_data, function(x)
      identical(unname(x["type"]), "HKQuantityTypeIdentifierStepCount"))]

It’s a list of vectors. A single record looks like this:

    # $Record
    #                                type                          sourceName
    # "HKQuantityTypeIdentifierStepCount"                     "Kontrprzyklad"
    #                                unit                        creationDate
    #                             "count"         "2015-02-19 07:42:27 +0100"
    #                           startDate                             endDate
    #         "2015-02-18 18:50:32 +0100"         "2015-02-18 18:50:35 +0100"
    #                               value
    #                                 "7"

It will be much easier to work with this data as a single data frame, so a small listwise extraction is needed:

    xml_dataSelDF <- data.frame(
      unit  = unlist(sapply(xml_dataSel, function(x) x["unit"])),
      day   = substr(unlist(sapply(xml_dataSel, function(x) x["startDate"])), 1, 10),
      hour  = substr(unlist(sapply(xml_dataSel, function(x) x["startDate"])), 12, 13),
      start = unlist(sapply(xml_dataSel, function(x) x["startDate"])),
      value = unlist(sapply(xml_dataSel, function(x) x["value"]))
    )

And the data looks like this:

    #    unit        day hour                     start value
    # 1 count 2015-02-18   18 2015-02-18 18:50:32 +0100     7
    # 2 count 2015-02-18   18 2015-02-18 18:50:35 +0100     4
    # 3 count 2015-02-18   18 2015-02-18 18:50:37 +0100     3
    # 4 count 2015-02-18   18 2015-02-18 18:50:40 +0100     4
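The same selection can also be written with XPath on the parsed document, instead of converting everything to a list first. The sketch below is one possible way to do it with `getNodeSet()` and `xmlGetAttr()` from the XML package. The tiny XML string is made up for illustration (only the attribute names are taken from the record shown above), and `toy_xml`, `toy_doc`, `step_nodes` and `toy_steps` are hypothetical names that do not appear in the original code.

```r
library(XML)

# Made-up miniature export; a real export.xml is far larger
toy_xml <- '<HealthData>
  <Record type="HKQuantityTypeIdentifierStepCount" unit="count"
          startDate="2015-02-18 18:50:32 +0100" endDate="2015-02-18 18:50:35 +0100" value="7"/>
  <Record type="HKQuantityTypeIdentifierDistanceWalkingRunning" unit="km"
          startDate="2015-02-18 18:50:32 +0100" endDate="2015-02-18 18:50:35 +0100" value="0.005"/>
  <Record type="HKQuantityTypeIdentifierStepCount" unit="count"
          startDate="2015-02-18 18:50:35 +0100" endDate="2015-02-18 18:50:37 +0100" value="4"/>
</HealthData>'

toy_doc <- xmlParse(toy_xml, asText = TRUE)

# Select only the step-count records with an XPath attribute predicate
step_nodes <- getNodeSet(toy_doc, "//Record[@type='HKQuantityTypeIdentifierStepCount']")

# Pull the attributes of interest straight into a data frame
toy_steps <- data.frame(
  start = sapply(step_nodes, xmlGetAttr, "startDate"),
  value = as.numeric(sapply(step_nodes, xmlGetAttr, "value")),
  stringsAsFactors = FALSE
)

print(toy_steps)
```

On a large export this avoids building the full nested list, but whether it is actually faster on your file is something to measure rather than assume.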

Each row of xml_dataSelDF holds the number of steps counted in one short time interval (a few seconds in the rows above). For us it will be much easier to work with daily aggregates.

A little dplyr trick gives us the number of steps per day:

    library(dplyr)

    # value is a factor here, so convert via character before summing
    dataDF <- xml_dataSelDF %>%
      group_by(day) %>%
      summarise(sum = sum(as.numeric(as.character(value))))

    head(dataDF)
    # Source: local data frame [6 x 2]
    #
    #          day   sum
    #       (fctr) (dbl)
    # 1 2015-02-18    90
    # 2 2015-02-19 10683
    # 3 2015-02-20  5465
    # 4 2015-02-21  4212
    # 5 2015-02-22  2085
    # 6 2015-02-23  7199
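The `as.numeric(as.character(value))` step matters because the `(fctr)` type of the `day` column in the output above suggests that the strings were stored as factors. A minimal illustration with a made-up factor (`steps_factor` is a hypothetical name, not part of the original code) shows the difference:

```r
# Made-up step counts stored as a factor, as data.frame() did by default before R 4.0
steps_factor <- factor(c("7", "4", "3", "4"))

# Direct conversion returns the internal level codes, not the counts
as.numeric(steps_factor)
# 3 2 1 2

# Going through character first recovers the actual numbers
as.numeric(as.character(steps_factor))
# 7 4 3 4

sum(as.numeric(as.character(steps_factor)))
# 18
```

In R 4.0 and later `data.frame()` keeps strings as character by default, so there `as.numeric(value)` alone would be enough; the double conversion is harmless either way.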

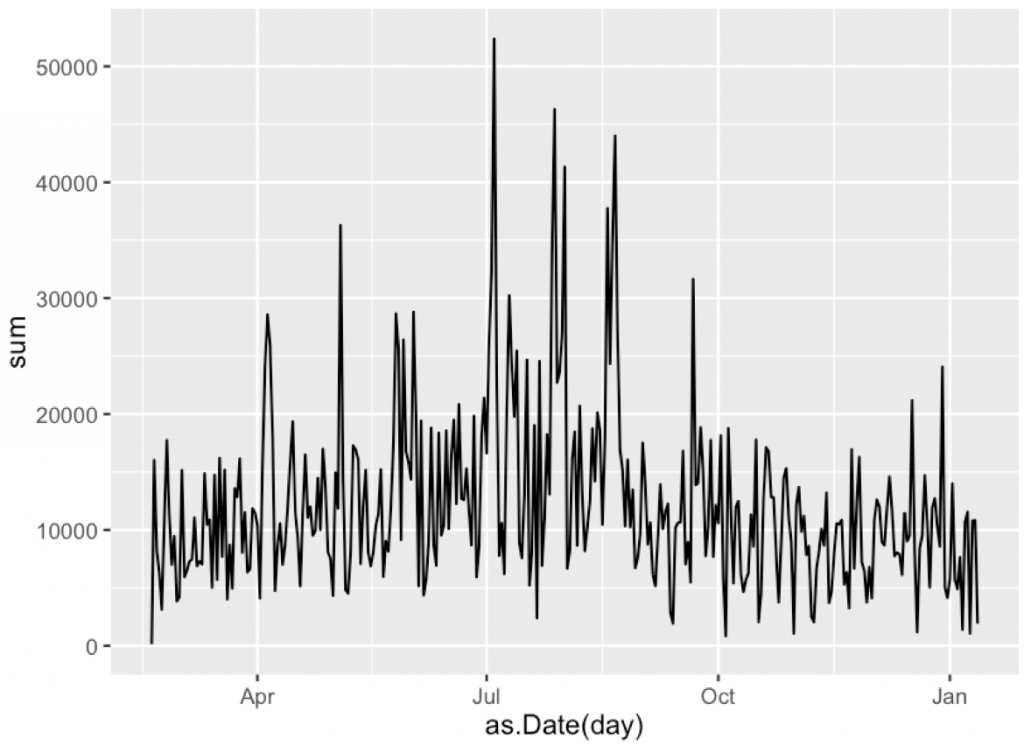

Let’s see what this profile looks like:

    library(ggplot2)
    ggplot(dataDF, aes(x = as.Date(day), y = sum)) + geom_line()

A very nice time series!

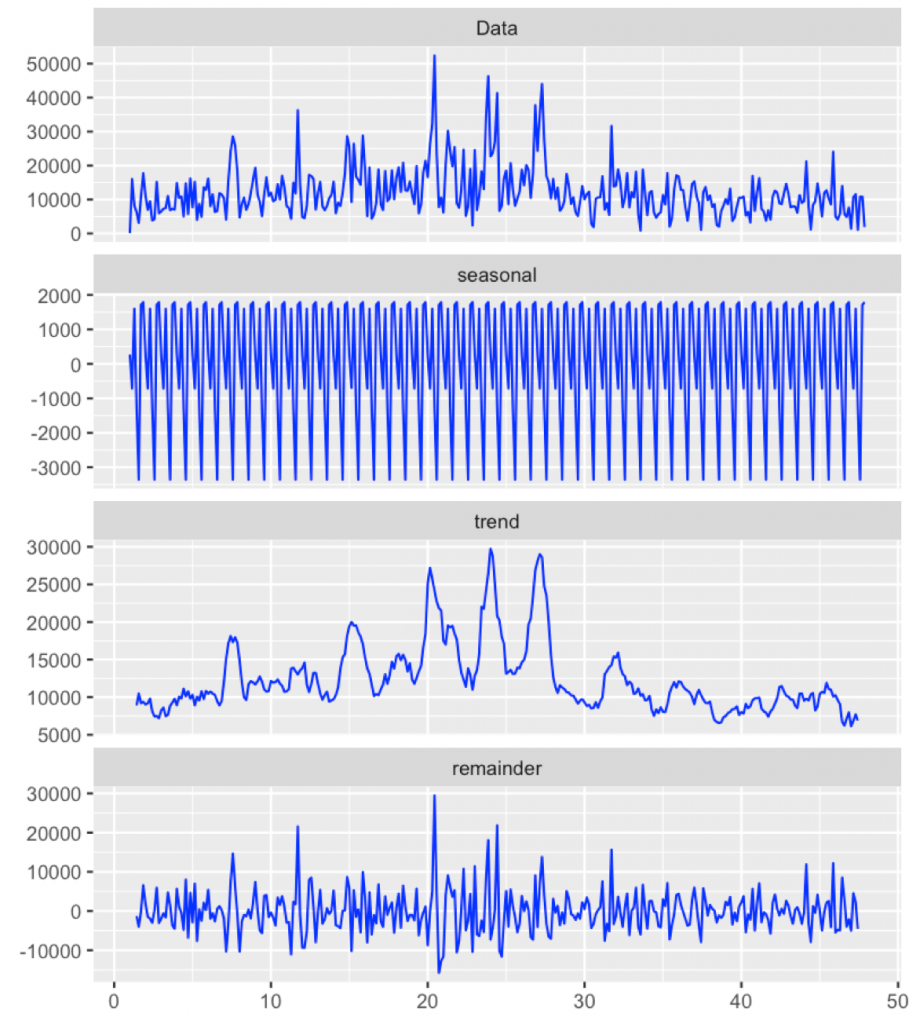

So it’s time for some decomposition.

There is probably some periodicity related to weeks (I have a different number of teaching hours on different days, which will surely have some impact).

Let’s decompose the series into a seasonal component, trend and residuals.

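    library(ggfortify)

    # tsDF is not defined in the post; a weekly period (frequency = 7) is assumed here
    tsDF <- ts(dataDF$sum, frequency = 7)
    ds <- decompose(tsDF)
    ds$time <- as.Date(as.character(dataDF$day))
    autoplot(ds, ts.colour = 'blue')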

There is a strong seasonal component; we will dig deeper into it next time. The trend is around 10,000 steps a day, not that bad.
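To make the three components more concrete, here is a small sketch on simulated data rather than on the real export. The weekly pattern, the noise level and all names (`weekly_pattern`, `sim_steps`, `sim_ts`, `sim_dec`) are made up for illustration; the only assumption carried over from the analysis is a period of 7 days.

```r
set.seed(1)  # reproducible noise

# Made-up average steps for the 7 days of a week, repeated for 10 weeks plus noise
weekly_pattern <- c(12000, 11000, 9000, 10000, 8000, 6000, 5000)
n_weeks <- 10
sim_steps <- rep(weekly_pattern, n_weeks) + rnorm(7 * n_weeks, sd = 500)

# frequency = 7 tells decompose() that one period is 7 observations
sim_ts <- ts(sim_steps, frequency = 7)
sim_dec <- decompose(sim_ts)  # additive model by default

# One seasonal effect per position in the week, centred around zero
round(sim_dec$figure)

# The trend is a centred moving average, so it is NA at both ends of the series
ok <- !is.na(sim_dec$trend)

# In the additive model: observed = trend + seasonal + random
recomposed <- sim_dec$trend + sim_dec$seasonal + sim_dec$random
all.equal(as.numeric(recomposed[ok]), as.numeric(sim_ts[ok]))
```

With `frequency = 7` the time axis of the series is measured in weeks, which is why the plot is read in week numbers.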

Weeks 20 to 25 were the summer holidays; the larger bumps correspond to hiking, sightseeing, etc. Around week 30 the winter semester started, with teaching after a longer break. It was not an active semester; November in particular was a disaster in terms of activity. So you know my plans for the new year.

Next time I will show some more detailed exploration.