February brought a groundbreaking event: the building of a new state-of-the-art neural network for natural language processing. And then the unthinkable happened.

Machine learning has already shown that it is a world-transforming technology used in various applications, including drug discovery, saving endangered species and designing software for sophisticated prosthetic legs. It is therefore natural that it sparks debates, both ethical and business-related. How did those debates play out in February?

1. OpenAI designed a new gold standard for natural language processing…

While artificial intelligence models outperform humans in many image processing tasks, natural language processing (NLP) is still a challenge for machines to master. Although AI-based services are already usable, with Google Translate being the pinnacle of today’s achievements, text produced by machines is still easy for humans to recognize. But this could change soon with the latest state-of-the-art model from OpenAI.

They designed a model called GPT-2 and prepared a dataset containing 8 million web pages for training. The network’s objective is to predict the next word given all the preceding words, which is the simplest way of doing unsupervised learning in NLP. The main improvements in this case are scaling up the model size (1.5B parameters) and training it on a gargantuan dataset of Internet text (40 GB) at an unprecedented scale (32 x TPUv3).
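For readers who prefer notation, the next-word objective described above is commonly written as maximizing the log-likelihood of each word given the words before it. The symbols below ($x_t$ for the word at position $t$, $T$ for the text length, $\theta$ for the model parameters) are notation introduced here for illustration and are not taken from the OpenAI announcement.

```latex
\mathcal{L}(\theta) = \sum_{t=1}^{T} \log p_\theta\left(x_t \mid x_1, \dots, x_{t-1}\right)
```

Because the training signal comes from the text itself, no human labeling is needed, which is why the approach counts as unsupervised.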

The resulting network takes the starting words of a text and then appends further words one at a time, each chosen based on “the most probable output”. The results are surprisingly convincing. The model is able to recognize the type of the starting text: if the first sentence is a news headline, it will produce a legitimate-sounding short news article. It is also good at adopting various styles, something unimaginable for earlier, more rigid models, which tended to produce problematic sentences.
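The word-by-word generation loop can be illustrated with a deliberately tiny stand-in. The sketch below is not GPT-2: it swaps the neural network for a simple bigram counter that looks only at the last word (GPT-2 conditions on all previous words), and it always picks the single most frequent continuation, whereas real systems often sample among likely candidates. The function names and the toy corpus are hypothetical and exist only for this example.

```python
from collections import Counter, defaultdict


def train_bigram_counts(corpus):
    """Count how often each word follows each other word in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def generate_greedy(counts, prompt, max_new_words=5):
    """Extend the prompt by repeatedly appending the most frequent next word."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_new_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # the last word was never followed by anything in the corpus
        # most_common(1) returns [(word, count)] for the top candidate
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical toy corpus, standing in for the 8 million web pages.
toy_corpus = [
    "the model writes a short article",
    "the model writes a news story",
    "the model writes a short article about unicorns",
]
counts = train_bigram_counts(toy_corpus)
generated = generate_greedy(counts, "the model", max_new_words=4)
print(generated)
```

Even this crude version shows the core idea: the prompt sets the context, and every new word is chosen from what most often followed that context in the training text.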

Example:

Human written:

In a shocking finding, scientist discovered a herd of unicorns living in a remote, previously unexplored valley, in the Andes Mountains. Even more surprising to the researchers was the fact that the unicorns spoke perfect English.

Model completion (machine-written, 10 tries):

The scientist named the population, after their distinctive horn, Ovid’s Unicorn. These four-horned, silver-white unicorns were previously unknown to science. Now, after almost two centuries, the mystery of what sparked this odd phenomenon is finally solved. Dr. Jorge Pérez, an evolutionary biologist from the University of La Paz, and several companions, were exploring the Andes Mountains when they found a small valley, with no other animals or humans. Pérez noticed that the valley had what appeared to be a natural fountain, surrounded by two peaks of rock and silver snow. Pérez and the others then ventured further into the valley. “By the time we reached the top of one peak, the water looked blue, with some crystals on top,” said Pérez. Pérez and his friends were astonished to see the unicorn herd. These creatures could be seen from the air without having to move too much to see them – they were so close they could touch their horns. While examining these bizarre creatures the scientists discovered that the creatures also spoke some fairly regular English. Pérez stated, “We can see, for example, that they have a common ‘language,’ something like a dialect or dialectic.”

More examples of AI-generated texts may be found on the OpenAI blog. Moreover, the network is capable of transfer learning: a small amount of additional training is enough to enable the model to perform more sophisticated tasks. Transfer learning is a gateway technology for modern image recognition applications, including art recognition and visual quality control.

Why does it matter

Alan Turing, the godfather of modern information technology, was firmly convinced that the ability to understand language is a key indicator of intelligence. Natural language, the emotions hidden beneath the words and all the cultural and societal contexts behind sentences are truly unique to human beings. Making this part of the world approachable for machines is undoubtedly a great breakthrough and opens up countless possibilities, from battling hate crime to various business applications.

2. … and they didn’t release it to the public

With great power comes great responsibility, and the events of February 2019 show it clearly. Despite best practices and established custom, OpenAI decided not to release the state-of-the-art neural network to the public as open source software. The organization did this to prevent the neural network from being used for malicious purposes, with the spread of fake news as a top concern. The decision sparked a debate on Reddit, Twitter and various other media, where participants argued about the safety of releasing this “potentially dangerous technology” and about departing from the established practice of open-sourcing research results. Researchers are afraid of opening the door to “deepfakes for texts”.

Why does it matter

AI ethics and responsibility were highlighted as one of the major AI trends of 2019. A situation where researchers choose safety over progress is unusual. On the other hand, fake news is considered one of the major threats of the future and was recognized by the World Economic Forum as one of the most dangerous online activities in 2018. With the rise of autonomous cars and the IoT revolution building a framework for sophisticated AI-powered solutions, such dilemmas may become increasingly common.

3. China’s AI strategy at a glance (or even more)

In the last AI Monthly digest, we covered the AI strategy forged in Finland, where the national pursuit of AI applications grew from a grassroots movement. In contrast, China has a centralized strategy to make the country an AI giant. The Center for a New American Security (CNAS) has published a report that provides deeper insight into China’s AI strategy. The report is a long read written by Gregory C. Allen, who had a chance to meet a few times with high-ranking Chinese officials at conferences focusing on artificial intelligence. Topics covered in the text:

- Chinese views on importance and security of AI

- Strengths and weaknesses of China’s AI Ecosystem

- China’s short-term goals in AI

- The role of semiconductors

4. Introducing Paperswithcode

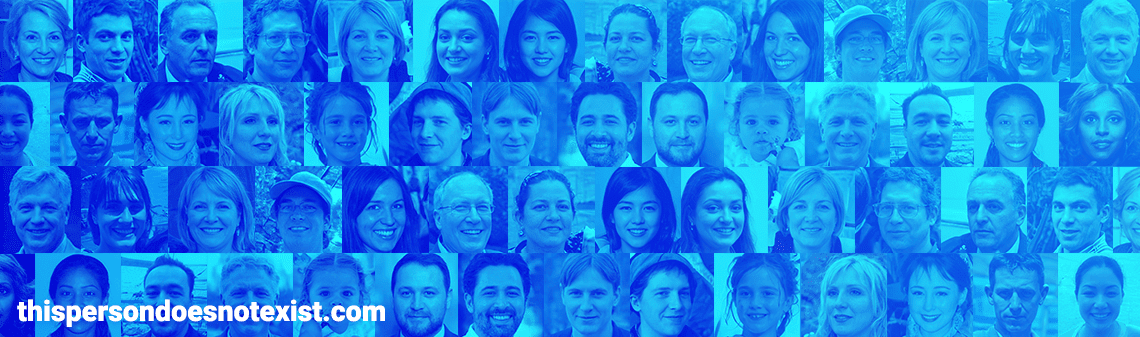

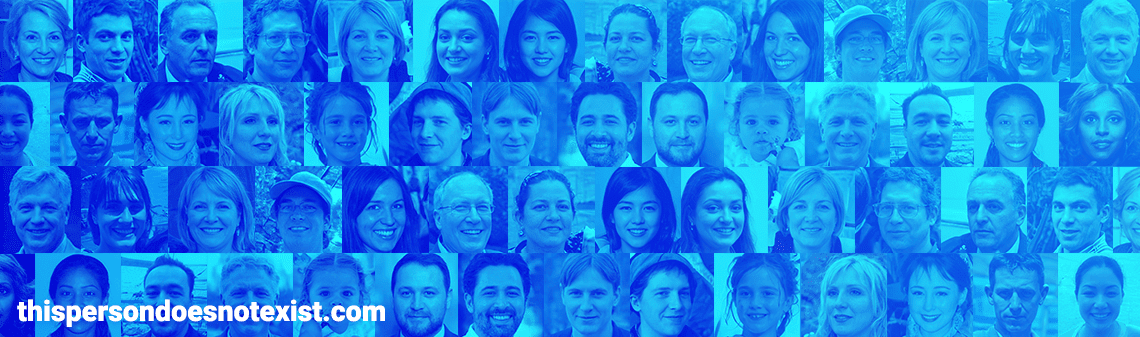

Staying updated on the latest developments in machine learning is not an easy task, especially considering that not all researchers decide to make their code available. Releasing the code is good practice, but providing only a paper that “describes the matter enough” to reproduce the results is also acceptable. Using Paperswithcode makes the process of matching papers with code much easier, saving a lot of time for people willing to broaden their knowledge.

Why does it matter

The portal itself is a handy tool for gaining knowledge and, a bit unintentionally, promotes the established approach of making research code public. If it gains popularity, it may become a tool of social pressure to publish code along with the research.

5. Popularity of thispersondoesnotexist.com

The ability of neural networks to create convincing human faces is one of the benchmarks for modern AI applications. To make the images available to the public, a StyleGAN-based model publishes a random face on the thispersondoesnotexist.com website. As in every aspect of life, the devil is in the details. The website is a great tool for spotting the weakest parts of generated faces.

- Hair – the model often places strands of hair in the air, unattached to the rest of the hair or floating in an unnatural way. Sometimes the skin around the hair “sticks” to it, creating scar-like marks that are disturbing to look at. The model also sometimes messes up hairstyles, mixing dreadlocks with straight and curly hair, but this is more challenging to spot.

- Teeth – algorithms tend to mess up teeth, placing them unnaturally, sometimes merged or blurred.

- Glasses – the eyes behind the glasses often do not match the rest of the depicted person. Sometimes this means placing the eyes of an old woman, surrounded by wrinkles, on a child’s face.