With your head in the clouds – how to harness the power of artificial intelligence

To fully leverage the potential of artificial intelligence in business, access to computing power is key. That doesn’t mean, however, that supercomputers are necessary for everyone to take advantage of the AI revolution.

In January 2019 the world learned of AlphaStar, an AI model that had defeated top professional StarCraft II players. Training the model by having it square off against humans would have required around 400 years of non-stop play. But thanks to enormous computing power and neural networks, AlphaStar’s creators needed a mere two weeks.

AlphaStar and similar AI models can be compared to a hypothetical chess player who reaches a superhuman level by playing millions of opponents, a number no human could ever hope to match because of our biological limitations. To avoid spending years on training, programmers give their models access to thousands of chessboards and let them play huge numbers of games at the same time. This parallelization can be achieved by carefully designing the architecture of the solution on a supercomputer, but there is also a much simpler way: ordering all these chessboards as instances (machines) in the cloud.
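The idea of playing many games at once can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `play_game` is a hypothetical stand-in for a real match (here a seeded coin flip), and a pool of threads stands in for the cloud instances. For genuinely compute-heavy games, separate processes or separate machines would take the place of the threads.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def play_game(seed: int) -> int:
    """Play one toy 'game': return 1 if the first player wins, else 0.

    Hypothetical stand-in for a real self-play match. Each game gets its
    own seeded random generator, so results are reproducible.
    """
    rng = random.Random(seed)
    return 1 if rng.random() < 0.5 else 0


def play_many(num_games: int, workers: int) -> list[int]:
    """Run num_games independent games across a pool of workers."""
    # Threads stand in for cloud instances in this sketch; CPU-heavy games
    # would use separate processes or machines instead.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(play_game, range(num_games)))


results = play_many(num_games=1000, workers=8)
win_rate = sum(results) / len(results)
```

Because the games are independent of one another, adding workers changes only how long the batch takes, not the outcome of any single game.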

Building such models comes with a price tag that few can afford as easily as Google-owned DeepMind, the creator of AlphaStar and AlphaGo. Given these high-profile experiments, it should come as no surprise that the business world still believes technological experiments are expensive and require specialized infrastructure. Sadly, this perception extends to AI solutions.

A McKinsey & Company study, “AI adoption advances, but foundational barriers remain,” offers practical confirmation of this thesis. A quarter of the companies McKinsey surveyed indicated that a lack of adequate infrastructure was a key obstacle to developing AI in their organization. Only the lack of an AI strategy and the lack of people and support in the organization were bigger obstacles, and those are fundamental issues that precede any discussion of infrastructure.

So how does one reap the benefits of artificial intelligence and machine learning without having to run multimillion-dollar projects? There are three ways.

- Precisely determine the scope of your AI experiments. This requires a careful selection of the business areas in which models will be tested and clearly defined success criteria. By scaling the test case down to a minimum, it is possible to see how a solution works while avoiding excessive hardware requirements.

- Use mainly cloud resources, which generate costs only when used and, thanks to economies of scale, keep prices down.

- Create a simplified model using cloud resources, thus combining the advantages of the first two approaches.

Feasibility study – even on a laptop

Even the most complicated problem can be tackled by reducing it to a feasibility study, that is, a version in which all elements and assumptions are simplified as far as possible. Limiting unnecessary complications and variables will remove the need for supercomputers to perform the calculations – even a decent laptop will get the job done.

An example of a challenge

In a bid to boost its cross-sell/up-sell efforts, a company that sells a wide variety of products through a range of channels needs an engine that recommends the products most likely to interest each customer.

A sensible approach to this problem would be to first identify a specific business area or line, analyze the products, and then select the distribution channel. The channel should provide easy access to structured data. The ideal candidate here is the online/e-commerce channel, where the company could limit the group of customers to a specific segment or location and start building and testing models on the resulting database.

Such an approach would enable the company to avoid gathering, ordering, standardizing and processing the huge amounts of data that complicate the process and account for most of the infrastructure costs. Instead, a prototype of the model can be prepared quickly, making it possible to move efficiently into the testing and verification phase of the solution’s operation.
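To give a sense of how small such a prototype can be, here is a minimal sketch of a recommender based on counting which products are bought together. It is not the method of any particular company: the `baskets` data, the product names, and the `build_cooccurrence` and `recommend` helpers are all hypothetical, and co-occurrence counting is simply one of the most basic techniques that fits the narrowed-down problem.

```python
from collections import Counter, defaultdict
from itertools import permutations

# Hypothetical purchase baskets from one channel and one customer segment.
baskets = [
    {"laptop", "mouse", "bag"},
    {"laptop", "mouse"},
    {"laptop", "bag"},
    {"phone", "case"},
    {"phone", "case", "charger"},
]


def build_cooccurrence(baskets):
    """Count how often each ordered pair of products shares a basket."""
    counts = defaultdict(Counter)
    for basket in baskets:
        for a, b in permutations(basket, 2):
            counts[a][b] += 1
    return counts


def recommend(product, counts, top_n=2):
    """Return up to top_n products most often bought with `product`."""
    # Sort by descending count, then by name so ties are resolved predictably.
    ranked = sorted(counts[product].items(), key=lambda kv: (-kv[1], kv[0]))
    return [name for name, _ in ranked[:top_n]]


counts = build_cooccurrence(baskets)
suggestions = recommend("laptop", counts)  # ['bag', 'mouse']
```

A prototype like this runs instantly on a laptop, which is the point: the first question is whether the recommendations make business sense, not how the system will scale.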

So narrowed down, the problem can be solved by even a single data scientist equipped with a good laptop. Another practical application of this approach comes via Uber. Instead of creating a centralized analysis and data science department, the company hired a machine learning specialist for each of its teams. This led to small adjustments being made to automate and speed up daily work in all of its departments. AI is perceived here not as another corporate-level project, but as a useful tool employees turn to every day.

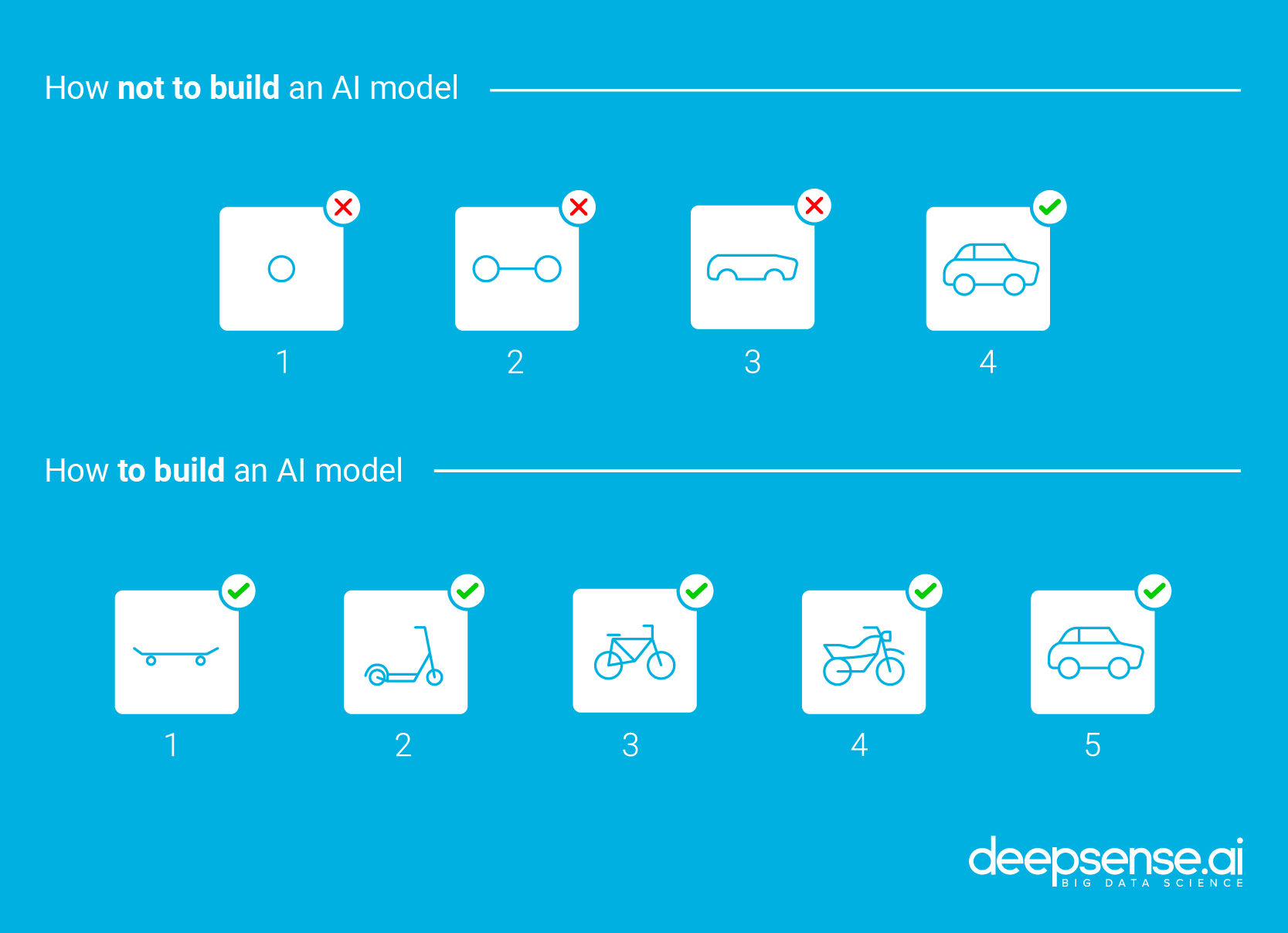

This approach fits into the widely used (and for good reason) agile paradigm, which aims to create value iteratively by collecting feedback as quickly as possible and incorporating it into the ‘agile’ product construction process (see the accompanying graphic).

When is power really necessary?

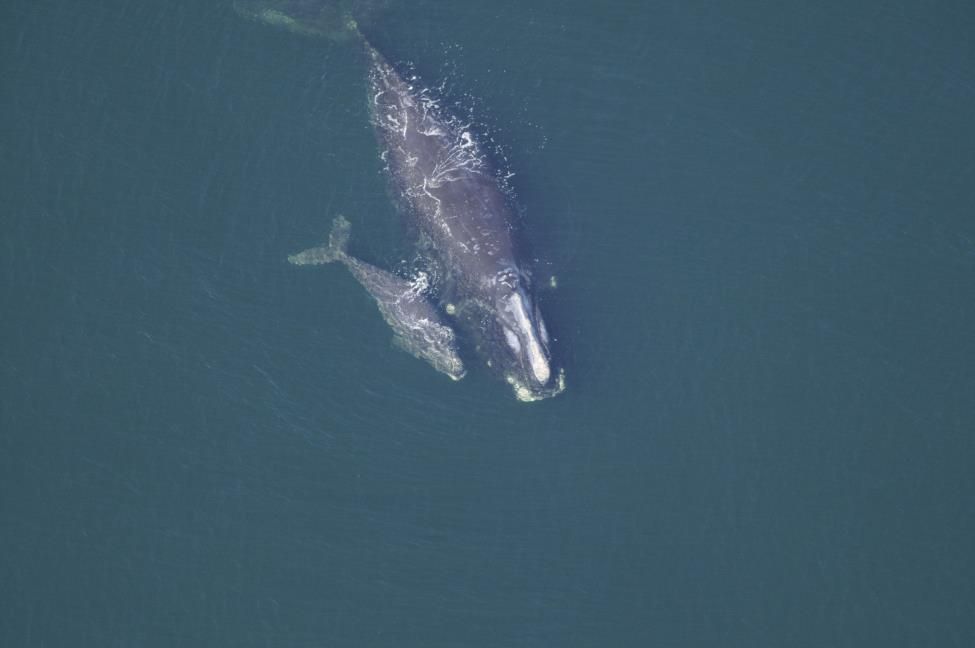

Higher computing power is required in the construction of more complex solutions whose tasks go far beyond the standard and which will be used on a much larger scale. The nature of the data being processed can pose yet another obstacle, as the model deepsense.ai developed for the National Oceanic and Atmospheric Administration (NOAA) will illustrate.

The company was tasked by the NOAA with recognizing individual North Atlantic right whales in aerial photographs. With only 450 individual whales left, the species is on the brink of extinction, and only by tracking the fate of each animal separately is it possible to protect the whales and provide timely help to injured ones.

Is that Thomas and Maddie in the picture?

The solution called for three neural networks that would perform different tasks consecutively – finding the whale’s head in the photo, cropping and framing the photo, and recognizing exactly which individual was shown.

These tasks required surgical precision and the use of all the data available, which, as you might imagine, came from a very limited number of photos. Such demands complicated the models and required considerable computing power. The solution ultimately automated the process of identifying individuals swimming throughout the high seas, cutting the human work time from a few hours spent poring over the catalog of whale photos to about thirty minutes for checking the results the model returned.

Reinforcement learning (RL) is another demanding category of AI. RL models are taught through interactions with their environment and a set of punishments and rewards. This means that neural networks can be trained to solve open problems and operate in variable, unpredictable environments based on pre-established principles, the best example being controlling an autonomous car.
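How learning from rewards and punishments works can be shown on a deliberately tiny example. The sketch below uses tabular Q-learning, a classic RL technique chosen here only for illustration; real systems such as autonomous driving rely on neural networks and far richer environments. The five-cell corridor, the reward values, and the function names are all hypothetical.

```python
import random

# Hypothetical toy environment: a corridor of 5 cells. The agent starts in
# cell 0 and is rewarded for reaching cell 4.
N_STATES = 5
ACTIONS = (-1, +1)  # step left, step right
GOAL = N_STATES - 1


def step(state, action):
    """Apply an action; return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), GOAL)
    if next_state == GOAL:
        return next_state, 1.0, True   # reward for reaching the goal
    return next_state, -0.01, False    # small punishment for every extra step


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning; returns the learned action-value table."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore occasionally, otherwise take the best-known action.
            if rng.random() < epsilon:
                a = rng.randrange(2)
            else:
                a = max(range(2), key=lambda i: q[state][i])
            next_state, reward, done = step(state, ACTIONS[a])
            # Move the estimate toward reward plus discounted future value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q_table = train()
# Best action index per non-goal cell: 1 means "step right".
policy = [max(range(2), key=lambda i: q_table[s][i]) for s in range(GOAL)]
```

After training, the learned policy is to step right in every cell, even though the agent was never told where the goal is. It discovered this purely from the rewards and punishments it received.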

In addition to the power needed to train the model, autonomous cars require a simulated environment to train in, and creating one is often even more demanding than building the neural network itself. Researchers from deepsense.ai and Google Brain are working on solving this problem through artificial imagination, and the results are more than promising.

Travel in the cloud

Each of the above examples requires access to large-scale computing power. When the demand for such power jumps, cloud computing holds the answer. The cloud offers three essential benefits in supporting the development of machine learning models:

- A pay-per-use model keeps costs low and removes barriers to entry – as the name suggests, the user pays only for the resources used, which drives costs down significantly. The cloud is estimated to be as much as 30% cheaper to implement and maintain than infrastructure a company owns. After all, it removes the need to invest in equipment that can handle complicated calculations. Instead, you pay only for a ‘training session’ for a neural network. Once the calculations are complete, the network is ready for operation. Running machine learning-based solutions isn’t all that demanding. Consider, for example, that AI is used in mobile phones to adjust technical settings and make photos the best they can be. Even the processor in your phone can handle running AI models.

- Easy development and expansion opportunities – transferring company resources to the cloud brings a wide range of complementary products within reach. Cloud computing makes it easy to share resources with external platforms, and thus to use the available data even more efficiently through the visualization and in-depth analysis those platforms offer. According to a RightScale report, State of the Cloud 2018, as an organization’s use of the cloud matures, its interest in additional services grows. Among enterprises describing themselves as cloud computing “beginners,” only 18 percent are interested in additional services. The number jumps to 40 percent among the “advanced” cohort. Whatever a company’s level of interest, a wide range of solutions supporting machine learning development awaits it, from simply renting processors and memory to platforms and ready-made components for the automatic construction of popular solutions.

- Permanent access to the best solutions – by purchasing specific equipment, a company ties itself to a particular set of technologies. Companies looking to develop machine learning may need to purchase special GPU-based computers to handle the calculations efficiently. At the same time, hardware is constantly evolving, which may mean that investing in a specific infrastructure is not the optimal choice. For example, Google’s Tensor Processing Unit (TPU) may be a better choice than a GPU for machine learning applications. Cloud computing makes it possible to always use the latest available technologies, in both hardware and software.
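The pay-per-use argument from the first point comes down to simple arithmetic, sketched below. All figures are made up for illustration and are not real vendor prices; the function names are hypothetical as well. The calculation asks how many hours of use it takes before renting costs as much as owning.

```python
def cloud_cost(hours_used: float, hourly_rate: float) -> float:
    """Pay-per-use: cost grows only with the hours actually consumed."""
    return hours_used * hourly_rate


def owned_cost(purchase_price: float, yearly_upkeep: float, years: float) -> float:
    """Owned hardware: purchase price plus upkeep, whether used or idle."""
    return purchase_price + yearly_upkeep * years


def break_even_hours(purchase_price, yearly_upkeep, years, hourly_rate):
    """Hours of use at which renting costs as much as owning."""
    return owned_cost(purchase_price, yearly_upkeep, years) / hourly_rate


# Illustrative, made-up figures; not real vendor prices.
hours = break_even_hours(purchase_price=20_000, yearly_upkeep=2_000,
                         years=3, hourly_rate=2.5)  # 10400.0
```

With these assumed numbers, owning pays off only above 10,400 hours of use in three years, or roughly 40% utilization around the clock. A team that trains models occasionally stays well below that threshold.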

Risk in the skies?

The difficulties that have put a question mark over migrating to the cloud are worth elaborating on here. In addition to the organizational maturity mentioned earlier and the fear of high costs, the biggest problem is that the cloud may make it impossible to keep the full control over one’s own data that some industries and jurisdictions require. Not all data can be freely sent (even for a moment) to foreign data centers. Hybrid solutions that use both the cloud and the organization’s own infrastructure are useful here: data are divided into those that must remain in the company and those that can be sent to the cloud.

In Poland, this problem will be addressed by the recently appointed Operator Chmury Krajowej (National Cloud Operator), which will offer cloud computing services fully implemented in data centers located in Poland. This could well convince some industries and the public sector to open up to the cloud.

Matching solutions

Concerns that a company lacks the computational resources it needs to use machine learning are in most cases unjustified. Many models can be built using ordinary commodity hardware that the enterprise already has. And when a system calls for long-term training of a model and large volumes of data, the power and infrastructure needed can easily be obtained from the cloud.

Machine learning is currently one of the hottest business trends. And for good reason. Easy access to the cloud democratizes computing power and thus also access to AI – small companies can use the enormous resources available every bit as quickly as the market’s behemoths. Essentially, there’s a feedback loop between cloud computing and machine learning that cannot be ignored.

The article was originally published in Harvard Business Review.