Computer vision enables machines to perform once-unimaginable tasks like diagnosing diabetic retinopathy as accurately as a trained physician or supporting engineers by automating their daily work.

Recent advances in computer vision are providing data scientists with tools to automate an ever-wider range of tasks. Yet companies sometimes don’t know how best to employ machine learning in their particular niche. The most common problem is understanding how a machine learning model will perform its task differently than a human would.

What is computer vision?

Computer vision is an interdisciplinary field that enables computers to understand, process and analyze images. The algorithms it uses can process both videos and static images. Practitioners strive to deliver a computer version of human sight while reaping the benefits of automation and digitization. Sub-disciplines of computer vision include object recognition, anomaly detection, and image restoration. While modern computer vision systems rely first and foremost on machine learning, there are also trigger-based solutions for performing simple tasks.

The following case studies show computer vision in action.

5 popular computer vision applications

1. Diagnosing diabetic retinopathy

Diagnosing diabetic retinopathy usually takes a skilled ophthalmologist. With obesity on the rise globally, so too is the threat of diabetes. As the World Bank indicates, obesity is a threat to world development: among Latin American countries, only Haiti has an average adult Body Mass Index below 25 (the upper limit of the healthy weight range). Rising obesity brings a higher risk of diabetes, as obesity is believed to account for 80-85% of the risk of developing type 2 diabetes. The result is a skyrocketing need for proper diagnostics.

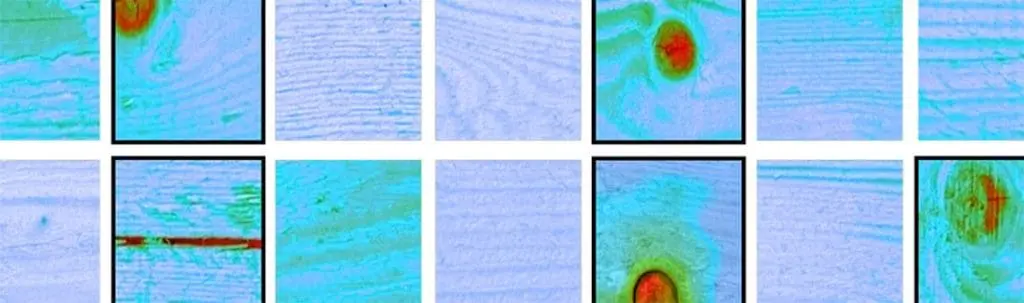

What is the difference between these two images?

The one on the left has no signs of diabetic retinopathy, while the other one has severe signs of it.

By applying algorithms to analyze digital images of the retina, deepsense.ai delivered a system that diagnosed diabetic retinopathy with the accuracy of a trained human expert. The key was training the model on a large dataset of healthy and diseased retinas.

2. AI movie restoration

The algorithms trained to find the difference between healthy and diseased retinas are equally capable of spotting blemishes on old movies and making the classics shine again.

Recorded on celluloid film, old movies are endangered by two factors: the disappearance of the playback equipment needed to watch them, and the nature of the film itself, which degrades with age. Moreover, digitizing a movie is no guarantee of flawlessness, as the digitized copy comes with new defects of its own.

https://www.facebook.com/deepsenseai/videos/355199718485959/?t=8

However, when trained on two versions of a movie – one with digital noise and one that is perfect – the model learns to spot the disturbances and remove them during the AI movie restoration process.
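The idea of learning from paired damaged and clean frames can be illustrated with a deliberately small sketch. It is not deepsense.ai's restoration model: as a stand-in, it fits a single linear filter by least squares, and the frames are synthetic. The helper names (`make_frame`, `fit_denoiser`, `restore`), the 5x5 window and the noise level are all assumptions made for illustration.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def make_frame(rng, size=48, noise_std=0.1):
    """Synthetic stand-in for a film frame: a smooth pattern plus Gaussian noise."""
    yy, xx = np.mgrid[0:size, 0:size] / size
    phase = rng.uniform(0, 2 * np.pi)
    clean = 0.5 + 0.4 * np.sin(2 * np.pi * xx + phase) * np.cos(2 * np.pi * yy)
    noisy = clean + rng.normal(0.0, noise_std, clean.shape)
    return noisy, clean


def patches(image, k):
    """Flatten every k x k window of `image` into one row."""
    return sliding_window_view(image, (k, k)).reshape(-1, k * k)


def fit_denoiser(noisy, clean, k=5):
    """Learn filter weights mapping a noisy k x k window to the clean center pixel.

    `k` is assumed to be odd so that each window has a center pixel.
    """
    half = k // 2
    X = patches(noisy, k)
    # Clean center pixels, in the same row-major order as the windows in X.
    y = clean[half:clean.shape[0] - half, half:clean.shape[1] - half].ravel()
    weights, *_ = np.linalg.lstsq(X, y, rcond=None)
    return weights


def restore(noisy, weights):
    """Apply the learned filter to every pixel, padding the borders by replication."""
    k = int(round(np.sqrt(weights.size)))
    half = k // 2
    padded = np.pad(noisy, half, mode="edge")
    return (patches(padded, k) @ weights).reshape(noisy.shape)


def mse(a, b):
    """Mean squared error between two frames."""
    return float(np.mean((a - b) ** 2))


rng = np.random.default_rng(0)
train_noisy, train_clean = make_frame(rng)   # the "two versions" used for training
test_noisy, test_clean = make_frame(rng)     # an unseen frame to restore

weights = fit_denoiser(train_noisy, train_clean)
restored = restore(test_noisy, weights)

print("error before restoration:", mse(test_noisy, test_clean))
print("error after restoration: ", mse(restored, test_clean))
```

A production system would replace the linear filter with a far more expressive model, but the training signal is the same: the damaged version is the input and the clean version is the target.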

3. Digitizing industrial installation documentation

Another example of the push towards digitization comes via industrial installation documentation. Like old films, this documentation is far from clean: symbols are used inconsistently and can get lost among the myriad lines and annotations, all of which a human has to make sense of. Digitizing such documentation can take a skilled engineer up to ten hours of painstaking work; machine learning can cut that to a mere 30 minutes.

4. Building digital maps from satellite images

Despite their seeming similarities, satellite images and fully functional maps that deliver actionable information are two different things. The differences are never clearer than during a natural disaster such as a flood or hurricane, which can quickly, if temporarily, render maps irrelevant.

deepsense.ai has also used image recognition technology to develop a solution that instantly turns satellite images into maps, replete with roads, buildings, trees and the countless obstacles that emerge during a crisis situation. The model architecture we used to create the maps is similar to those used to diagnose diabetic retinopathy or restore movies.

5. Aerial image recognition

Computer vision techniques can work as well on aerial images as they do on satellite images. deepsense.ai delivered a computer vision system that supports the US National Oceanic and Atmospheric Administration (NOAA) in recognizing individual North Atlantic right whales from aerial images.

With only about 411 whales alive, the species is highly endangered, so it is crucial that each individual be recognizable so its well-being can be reliably tracked. Before deepsense.ai delivered its AI-based system, identification was handled manually using a catalog of the whales. Tracking whales from aircraft above the ocean is monumentally difficult as the whales dive and rise to the surface, the telltale patterns on their heads obscured by rough seas and other forces of nature.

Bounding box produced by the head localizer

These obstacles made the process both time-consuming and prone to error. deepsense.ai delivered an aerial image recognition solution that improves identification accuracy and takes a mere 2% of the time NOAA once spent on manual tracking.

The deepsense.ai takeaway

As the above examples show, computer vision is today an essential component of numerous AI-based software development solutions. When combined with natural language processing, it can be used to read the ingredients from product labels and automatically sort them into categories. Alongside reinforcement learning, computer vision powers today’s groundbreaking autonomous vehicles. It can also support demand forecasting and function as a part of an end-to-end machine learning manufacturing support system.

The key difference between human vision and computer vision lies in the domain knowledge behind the data processing. Machines make no distinction between the types of image data they process, be it images of retinas, satellite images or documentation. What matters is providing enough training data for the model to learn whether a given case fits the pattern. The domain is usually irrelevant.